Wednesday, July 16, 2014

To crawl, or not to crawl, that is the robots.txt question.

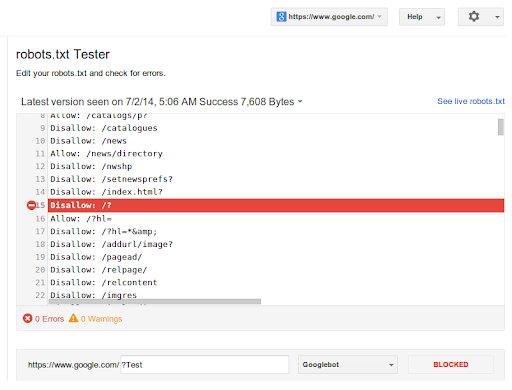

Making and maintaining correct robots.txt files can sometimes be difficult. While most sites have it easy (tip: they often don't even need a robots.txt file!), finding the rules within a large robots.txt file that are or were blocking individual URLs can be quite tricky. To make that easier, we're now announcing an updated robots.txt testing tool in Webmaster Tools.

You can find the updated testing tool in Webmaster Tools within the Crawl section:

Here you'll see the current robots.txt file, and can test new URLs to see whether they're disallowed for crawling. To guide your way through complicated rules, it will highlight the specific one that led to the final decision. You can make changes in the file and test those too, you'll just need to upload the new version of the file to your server afterwards to make the changes take effect. Our developers site has more about robots.txt rules and how the files are processed.

Additionally, you'll be able to review older versions of your robots.txt file, and see when access

issues block us from crawling. For example, if Googlebot sees a 500 server error for

the robots.txt file, we'll generally pause further crawling of the website.

Since there may be some errors or warnings shown for your existing sites, we recommend double-checking their robots.txt files. You can also combine it with other parts of Webmaster Tools: for example, you might use the updated Fetch as Google tool to render important pages on your website. If any blocked URLs are reported, you can use this robots.txt tester to find the rule that's blocking them, and, of course, then improve that. A common problem we've seen comes from old robots.txt files that block CSS, JavaScript, or mobile content—fixing that is often trivial once you've seen it.

We hope this updated tool makes it easier for you to test and maintain the robots.txt file. Should you have any questions, or need help with crafting a good set of rules, you can drop by our webmaster's help forum!