Page Summary

- The Lighting Estimation API helps create realistic AR experiences by analyzing images to provide detailed information about the lighting in a scene.
- The API provides data about various lighting cues including shadows, ambient light, shading, specular highlights, and reflections.
- Environmental HDR mode offers granular lighting estimation through a main directional light, ambient spherical harmonics, and an HDR cubemap for realistic rendering.
- Ambient Intensity mode provides coarse lighting estimation, determining average pixel intensity and color correction scalars for simpler use cases.

Platform-specific guides

Android (Kotlin/Java)

Android NDK (C)

Unity (AR Foundation)

Unreal Engine

A key part of creating realistic AR experiences is getting the lighting right. When a virtual object is missing a shadow or has a shiny material that doesn't reflect the surrounding space, users can sense that the object doesn't quite fit, even if they can't explain why. This is because humans subconsciously perceive cues about how objects are lit in their environment. The Lighting Estimation API analyzes camera images for such cues and provides detailed information about the lighting in a scene. You can then use this information when rendering virtual objects to light them under the same conditions as the scene they're placed in, keeping users grounded and engaged.

Lighting cues

The Lighting Estimation API provides detailed data that lets you mimic various lighting cues when rendering virtual objects. These cues are shadows, ambient light, shading, specular highlights, and reflections.

Shadows

Shadows are often directional and tell viewers where light sources are coming from.

Ambient light

Ambient light is the overall diffuse light that comes in from around the environment, making everything visible.

Shading

Shading is the intensity of the light. For example, different parts of the same object can have different levels of shading in the same scene, depending on their angle relative to the viewer and their proximity to a light source.

Specular highlights

Specular highlights are the shiny bits of surfaces that reflect a light source directly. Highlights on an object change relative to the position of a viewer in a scene.

Reflections

Light bounces off of surfaces differently depending on whether the surface has specular (highly reflective) or diffuse (not reflective) properties. For example, a metallic ball will be highly specular and reflect its environment, while another ball painted a dull matte gray will be diffuse. Most real-world objects have a combination of these properties — think of a scuffed-up bowling ball or a well-used credit card.

Reflective surfaces also pick up colors from the ambient environment. The coloring of an object can be directly affected by the coloring of its environment. For example, a white ball in a blue room will take on a bluish hue.

Environmental HDR mode

This mode consists of separate APIs that allow for granular and realistic lighting estimation for directional lighting, shadows, specular highlights, and reflections.

Environmental HDR mode uses machine learning to analyze the camera images in real time and synthesize environmental lighting to support realistic rendering of virtual objects.

This lighting estimation mode provides:

- Main directional light. Represents the main light source. Can be used to cast shadows.
- Ambient spherical harmonics. Represents the remaining ambient light energy in the scene.
- An HDR cubemap. Can be used to render reflections in shiny metallic objects.

You can use these APIs in different combinations, but they're designed to be used together for the most realistic effect.

Main directional light

The main directional light API calculates the direction and intensity of the scene's main light source. This information allows virtual objects in your scene to show reasonably positioned specular highlights, and to cast shadows in a direction consistent with other visible real objects.

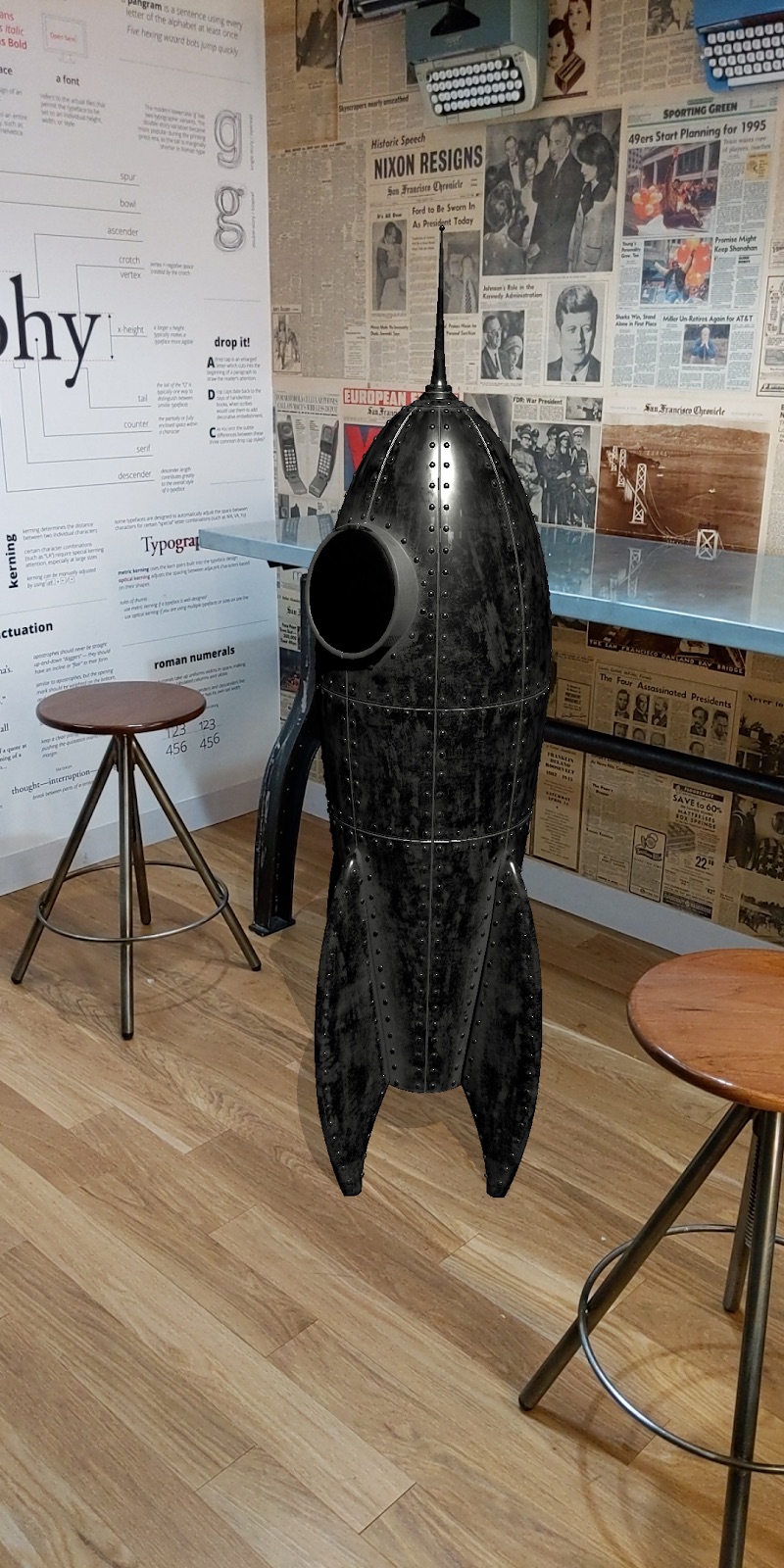

To see how this works, consider these two images of the same virtual rocket. In the image on the left, there's a shadow under the rocket, but its direction doesn't match the other shadows in the scene. In the image on the right, the shadow points in the correct direction. It's a subtle but important difference, and it grounds the rocket in the scene because the direction and intensity of the shadow better match other shadows in the scene.

When the main light source or a lit object is in motion, the specular highlight on the object adjusts its position in real time relative to the light source.
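As a rough illustration of why the highlight moves, the sketch below shades one surface point with a Lambert diffuse term and a Blinn-Phong specular term. It is not ARCore code: the function names, the `shininess` parameter, and the choice of the Blinn-Phong model are assumptions made for this example, and the light direction and intensity stand in for whatever values a lighting estimate supplies.

```python
import math


def normalize(v):
    """Return v scaled to unit length (zero vectors are returned unchanged)."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        return tuple(float(c) for c in v)
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def shade(normal, to_light, to_viewer, light_intensity, shininess=32.0):
    """Return (diffuse, specular) for one surface point.

    Assumption: to_light and to_viewer both point away from the surface.
    """
    n = normalize(normal)
    l = normalize(to_light)
    v = normalize(to_viewer)

    # Lambert term: depends only on the angle between surface and light.
    diffuse = light_intensity * max(dot(n, l), 0.0)
    if diffuse == 0.0:
        return 0.0, 0.0  # surface faces away from the light

    # Blinn-Phong term: the half vector depends on the viewer, so the
    # highlight shifts whenever the viewer or the light moves.
    h = normalize(tuple(a + b for a, b in zip(l, v)))
    specular = light_intensity * max(dot(n, h), 0.0) ** shininess
    return diffuse, specular
```

Calling `shade` with the same light but two different `to_viewer` vectors leaves the diffuse term unchanged while the specular term changes, which matches the behavior described above.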

Directional shadows also adjust their length and direction relative to the position of the main light source, just as they do in the real world. To illustrate this effect, consider these two mannequins, one virtual and the other real. The mannequin on the left is the virtual one.
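The relationship between light direction and shadow length can be sketched with a simple planar projection. This is a minimal example under stated assumptions: the ground is the plane y = 0, `light_travel_dir` is the direction in which light travels (negate the vector if your source reports the direction toward the light), and `project_shadow` is a hypothetical helper, not part of any SDK.

```python
def project_shadow(point, light_travel_dir):
    """Project a point onto the ground plane y = 0 along the light direction.

    Returns the shadow position, or None if the light does not travel
    downward (in which case no shadow falls on the ground).
    """
    px, py, pz = point
    dx, dy, dz = light_travel_dir
    if dy >= 0:
        return None
    # Distance along the ray until it reaches y = 0.
    t = -py / dy
    return (px + t * dx, 0.0, pz + t * dz)
```

A light at 45 degrees of elevation gives a shadow as long as the object is tall, and a lower light gives a longer shadow: the top of a 2-unit-tall object at the origin lands 2 units away for direction `(1, -1, 0)` and 4 units away for `(2, -1, 0)`.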

Ambient spherical harmonics

In addition to the light energy in the main directional light, ARCore provides spherical harmonics, representing the overall ambient light coming in from all directions in the scene. Use this information during rendering to add subtle cues that bring out the definition of virtual objects.

Consider these two images of the same rocket model. The rocket on the left is rendered using lighting estimation information detected by the main directional light API. The rocket on the right is rendered using information detected by both the main directional light and ambient spherical harmonics APIs. The second rocket clearly has more visual definition, and blends more seamlessly into the scene.
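To make the idea of spherical harmonics more concrete, here is a minimal sketch that evaluates a second-order (nine-term) real spherical harmonic expansion for a given surface normal. It assumes raw coefficients in the common ordering shown in the code, one RGB triple per basis function; whether a particular runtime delivers coefficients in this order, or already scaled, is not stated here and should be checked against its reference documentation. The function name `eval_sh_l2` is hypothetical.

```python
def eval_sh_l2(coeffs, normal):
    """Evaluate ambient light for a unit normal from 9 RGB SH coefficients.

    Assumption: coeffs is a list of 9 (r, g, b) triples ordered as
    L00, L1-1, L10, L11, L2-2, L2-1, L20, L21, L22.
    """
    x, y, z = normal
    # Standard real spherical harmonic basis functions up to band 2.
    basis = [
        0.282095,
        0.488603 * y,
        0.488603 * z,
        0.488603 * x,
        1.092548 * x * y,
        1.092548 * y * z,
        0.315392 * (3.0 * z * z - 1.0),
        1.092548 * x * z,
        0.546274 * (x * x - y * y),
    ]
    return tuple(
        sum(b * c[channel] for b, c in zip(basis, coeffs))
        for channel in range(3)
    )
```

The first coefficient contributes the same amount for every normal, while the higher bands make the result vary with orientation. That variation is what gives a rendered object the extra definition described above.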

HDR cubemap

Use the HDR cubemap to render realistic reflections on virtual objects with medium to high glossiness, such as shiny metallic surfaces. The cubemap also affects the shading and appearance of objects. For example, the material of a specular object surrounded by a blue environment will reflect blue hues. Calculating the HDR cubemap requires a small amount of additional CPU computation.

Whether you should use the HDR cubemap depends on how an object reflects its surroundings. Because the virtual rocket is metallic, it has a strong specular component that directly reflects the environment around it. Thus, it benefits from the cubemap. On the other hand, a virtual object with a dull gray matte material doesn't have a specular component at all. Its color primarily depends on the diffuse component, and it wouldn't benefit from a cubemap.
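The specular lookup that makes a cubemap useful can be sketched in two steps: reflect the view ray about the surface normal, then pick the cube face that the reflected ray points at. The helper names and the face labels below are illustrative assumptions, not SDK identifiers, and a real renderer would normally do this in a shader with a built-in cubemap sampler.

```python
def reflect(incident, normal):
    """Reflect an incident direction about a unit surface normal."""
    d = sum(i * n for i, n in zip(incident, normal))
    return tuple(i - 2.0 * d * n for i, n in zip(incident, normal))


def cubemap_face(direction):
    """Return the label of the cube face hit by a direction vector.

    The face is chosen by the axis with the largest absolute component.
    """
    x, y, z = direction
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax >= ay and ax >= az:
        return "+x" if x > 0 else "-x"
    if ay >= az:
        return "+y" if y > 0 else "-y"
    return "+z" if z > 0 else "-z"
```

For a purely diffuse material there is no reflected ray worth looking up, which is why such an object gains little from the cubemap.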

All three Environmental HDR APIs were used to render the rocket below. The HDR cubemap enables the reflective cues and further highlighting that ground the object fully in the scene.

Here is the same rocket model in differently lit environments. All of these scenes were rendered using information from the three APIs, with directional shadows applied.

Ambient Intensity mode

Ambient Intensity mode determines the average pixel intensity and the color correction scalars for a given image. It's a coarse setting designed for use cases where precise lighting is not critical, such as objects that have baked-in lighting.

Pixel intensity

Captures the average pixel intensity of the lighting in a scene. You can apply this lighting to a whole virtual object.

Color

Detects the white balance for each individual frame. You can then color correct a virtual object so that it integrates more smoothly into the overall coloring of the scene.
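One simple way to combine the two values is to multiply an object's base color by the per-channel color correction scalars and the pixel intensity. The sketch below assumes colors in the 0 to 1 range and clamps the result. The function name is hypothetical, and real renderers often also normalize the intensity against a reference gray level, which is omitted here.

```python
def apply_ambient_intensity(base_rgb, pixel_intensity, color_correction):
    """Scale a base color by the estimated intensity and color correction.

    Assumption: all inputs are linear values, and the output is clamped
    to the displayable range [0, 1].
    """
    return tuple(
        min(max(channel * scale * pixel_intensity, 0.0), 1.0)
        for channel, scale in zip(base_rgb, color_correction)
    )
```

Because the same scalars are applied to the whole object, this approach suits content with baked-in lighting, where only overall brightness and tint need to follow the scene.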

Environment probes

Environment probes organize 360-degree camera views into environment textures such as cube maps. These textures can then be used to realistically light virtual objects, such as a virtual metal ball that “reflects” the room it’s in.