Page Summary

- The Scene Semantics API provides real-time, ML model-based semantic information about the scene surrounding the user in outdoor environments.
- The API returns a semantic image with a label for each pixel, a confidence image with the probability of each label, and a query for the fraction of pixels that carry a specific label.
- Before using the API, you need to understand fundamental AR concepts, configure an ARCore session, and check whether the user's device supports Scene Semantics.
- To use Scene Semantics, you must explicitly enable semantic mode in your ARCore session configuration.
- Semantic and confidence images become available a few frames after the session starts, and you can retrieve them using GARFrame.semanticImage and GARFrame.semanticConfidenceImage.

Learn how to use the Scene Semantics API in your own apps.

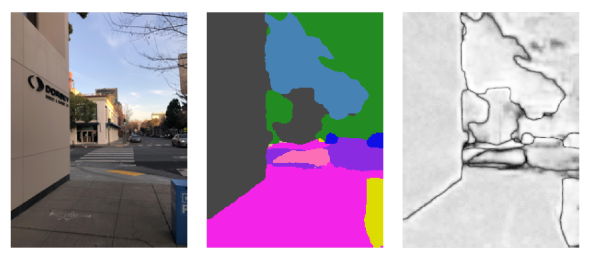

The Scene Semantics API helps developers understand the scene surrounding the user by providing ML model-based, real-time semantic information. Given an image of an outdoor scene, the API returns a label for each pixel across a set of useful semantic classes, such as sky, building, tree, road, sidewalk, vehicle, person, and more. In addition to pixel labels, the Scene Semantics API also offers a confidence value for each pixel label and an easy-to-use way to query the prevalence of a given label in an outdoor scene.

From left to right, examples of an input image, the semantic image of pixel labels, and the corresponding confidence image:

Prerequisites

Make sure that you understand fundamental AR concepts and how to configure an ARCore session before proceeding.

Enable Scene Semantics

In a new ARCore session, check whether a user's device supports the Scene Semantics API. Not all ARCore-compatible devices support the Scene Semantics API due to processing power constraints.

To save resources, Scene Semantics is disabled by default on ARCore. Enable semantic mode to have your app use the Scene Semantics API.

GARSessionConfiguration *configuration = [[GARSessionConfiguration alloc] init];
// Enable semantic mode only on devices that support it.
if ([self.garSession isSemanticModeSupported:GARSemanticModeEnabled]) {
  configuration.semanticMode = GARSemanticModeEnabled;
}
NSError *error = nil;
[self.garSession setConfiguration:configuration error:&error];
if (error) {
  NSLog(@"Failed to configure the session: %@", error);
}

Obtain the semantic image

Once Scene Semantics is enabled, the semantic image can be retrieved. The semantic image is a kCVPixelFormatType_OneComponent8 image, where each pixel corresponds to a semantic label defined by GARSemanticLabel.

Use GARFrame.semanticImage to acquire the semantic image:

CVPixelBufferRef semanticImage = garFrame.semanticImage;
if (semanticImage) {
  // Use the semantic image here.
} else {
  // The semantic image is not available yet. It may be missing for the
  // first few frames, before the model has had a chance to run.
}

Output semantic images should be available after about 1-3 frames from the start of the session, depending on the device.
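Because the semantic image stores one byte per pixel, reading it comes down to indexing a row-padded byte buffer. The following is a minimal sketch in plain C, which compiles unchanged in an Objective-C source file. It assumes you have already locked the buffer with CVPixelBufferLockBaseAddress and obtained the base pointer, dimensions, and row stride from CVPixelBufferGetBaseAddress, CVPixelBufferGetWidth, CVPixelBufferGetHeight, and CVPixelBufferGetBytesPerRow. The helper names label_at and label_histogram are hypothetical and not part of ARCore.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Returns the label byte stored at pixel (x, y) of a one-component 8-bit
 * image. bytes_per_row is the row stride, which may be larger than the
 * width because rows can be padded. */
static uint8_t label_at(const uint8_t *base, size_t bytes_per_row,
                        size_t x, size_t y) {
    return base[y * bytes_per_row + x];
}

/* Fills histogram[v] with the number of pixels whose label byte equals v.
 * Padding bytes at the end of each row are skipped. */
static void label_histogram(const uint8_t *base, size_t width, size_t height,
                            size_t bytes_per_row, size_t histogram[256]) {
    assert(bytes_per_row >= width);
    for (size_t i = 0; i < 256; ++i) {
        histogram[i] = 0;
    }
    for (size_t y = 0; y < height; ++y) {
        const uint8_t *row = base + y * bytes_per_row;
        for (size_t x = 0; x < width; ++x) {
            histogram[row[x]]++;
        }
    }
}
```

Each byte can then be compared against a GARSemanticLabel value. Indexing by the row stride rather than the width matters because the two are not guaranteed to be equal.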

Obtain the confidence image

In addition to the semantic image, which provides a label for each pixel, the API also provides a confidence image of corresponding pixel confidence values. The confidence image is a kCVPixelFormatType_OneComponent8 image, where each pixel holds a value in the range [0, 255] that represents the probability associated with the semantic label for that pixel.

Use GARFrame.semanticConfidenceImage to acquire the semantic confidence image:

CVPixelBufferRef confidenceImage = garFrame.semanticConfidenceImage;
if (confidenceImage) {
  // Use the confidence image here.
} else {
  // The confidence image is not available yet. It may be missing for the
  // first few frames, before the model has had a chance to run.
}

Output confidence images should be available after about 1-3 frames from the start of the session, depending on the device.
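One way to use the confidence image is to ignore labels the model is unsure about. The sketch below, again in plain C, rests on two assumptions that this page does not state: that the confidence byte maps linearly onto [0.0, 1.0], and that the semantic and confidence images have the same width and height. The helper names confidence_to_unit and count_confident are hypothetical, and the base pointers and strides are assumed to come from locked CVPixelBufferRef objects.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Maps a confidence byte in [0, 255] to a value in [0.0, 1.0].
 * Assumption: the mapping is linear. */
static float confidence_to_unit(uint8_t confidence) {
    return (float)confidence / 255.0f;
}

/* Counts the pixels that carry `label` with a confidence byte of at least
 * `min_confidence`. Assumption: both images share the same width and
 * height, but each may have its own row stride. */
static size_t count_confident(const uint8_t *labels, size_t label_stride,
                              const uint8_t *conf, size_t conf_stride,
                              size_t width, size_t height,
                              uint8_t label, uint8_t min_confidence) {
    assert(label_stride >= width && conf_stride >= width);
    size_t count = 0;
    for (size_t y = 0; y < height; ++y) {
        const uint8_t *label_row = labels + y * label_stride;
        const uint8_t *conf_row = conf + y * conf_stride;
        for (size_t x = 0; x < width; ++x) {
            if (label_row[x] == label && conf_row[x] >= min_confidence) {
                ++count;
            }
        }
    }
    return count;
}
```

The threshold is application-specific. A stricter value trades coverage for fewer mislabeled pixels.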

Query the fraction of pixels for a semantic label

You can also query the fraction of pixels in the current frame that belong to a particular class, such as sky. This query is more efficient than returning the semantic image and performing a pixel-wise search for a specific label. The returned fraction is a float value in the range [0.0, 1.0].

Use fractionForSemanticLabel: to acquire the fraction for a given label:

// Ensure that semantic data is present for the GARFrame.
if (garFrame.semanticImage) {
  float fraction = [garFrame fractionForSemanticLabel:GARSemanticLabelSky];
}
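For comparison, the pixel-wise search that this query makes unnecessary might look like the following plain C sketch. It is not ARCore's implementation. It only illustrates the quantity being reported, assuming the fraction is the number of matching pixels divided by the total pixel count. The function name label_fraction is hypothetical, and the buffer arguments are assumed to come from a locked semantic image.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Returns the fraction of pixels, in [0.0, 1.0], whose label byte equals
 * `label`. Returns 0.0 for an empty image. */
static float label_fraction(const uint8_t *base, size_t width, size_t height,
                            size_t bytes_per_row, uint8_t label) {
    if (width == 0 || height == 0) {
        return 0.0f;
    }
    assert(bytes_per_row >= width);
    size_t matches = 0;
    for (size_t y = 0; y < height; ++y) {
        const uint8_t *row = base + y * bytes_per_row;
        for (size_t x = 0; x < width; ++x) {
            if (row[x] == label) {
                ++matches;
            }
        }
    }
    return (float)matches / (float)(width * height);
}
```

Running this on every frame touches every pixel on the CPU, which is the cost that fractionForSemanticLabel: lets you avoid.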