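Interactive Canvas is a framework built on Google Assistant that lets developers add visual, immersive experiences to conversational Actions. This visual experience is an interactive web app that Assistant sends as a response to the user in conversation. Unlike rich responses, which appear inline in an Assistant conversation, an Interactive Canvas web app renders as a full-screen web view.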

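Use Interactive Canvas if you want to do any of the following in your Action:

- Create full-screen visuals

- Create custom animations and transitions

- Visualize data

- Create custom layouts and GUIs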

Supported devices

Interactive Canvas currently supports the following devices:

- Smart displays

- Android mobile devices (excluding tablets)

How it works

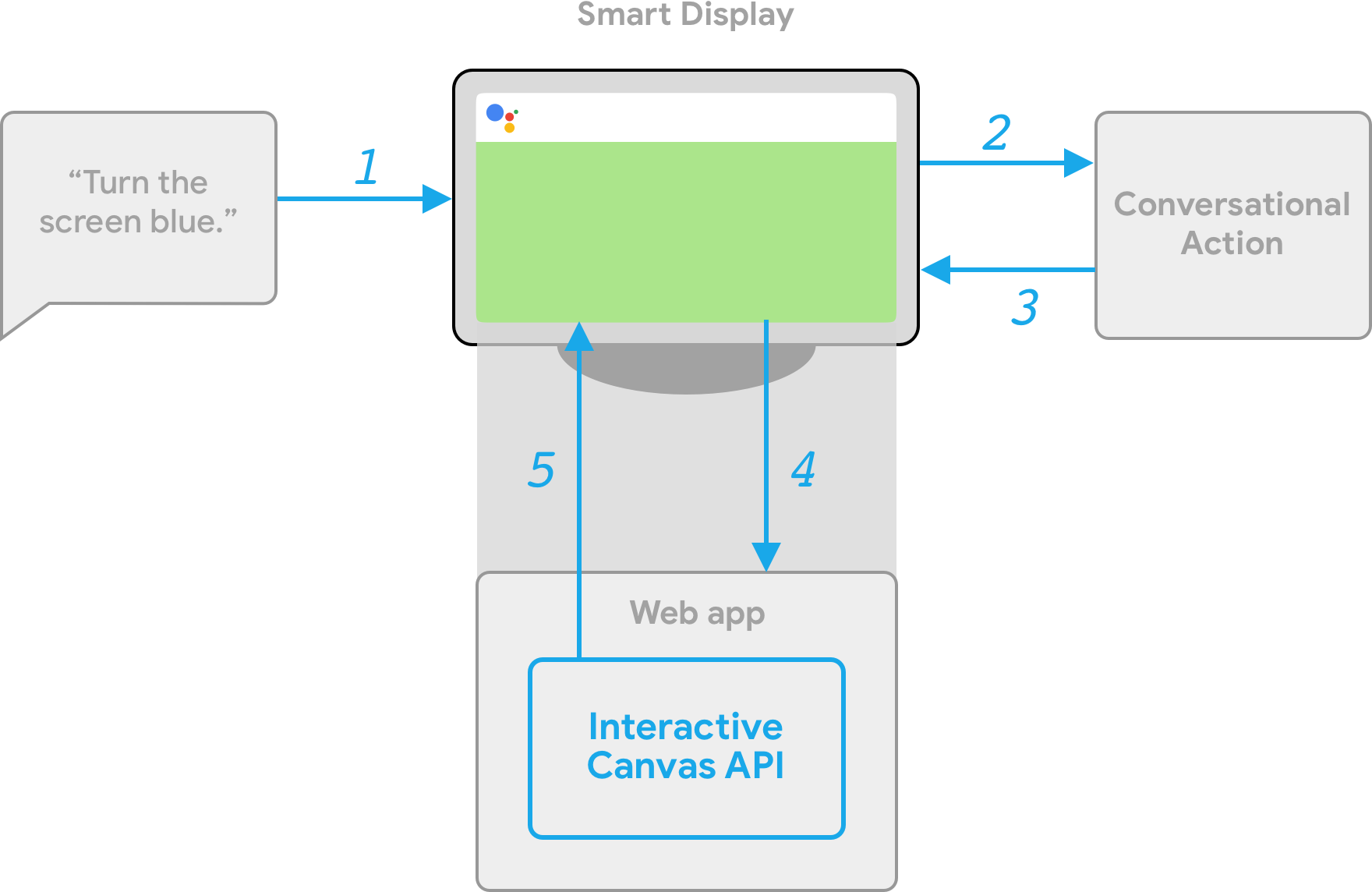

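An Action that uses Interactive Canvas consists of two main components:

- Conversational Action: An Action that uses a conversational interface to fulfill user requests. You can build the conversation with Actions Builder or the Actions SDK.

- Web app: A front-end web app with custom visuals that your Action sends as a response to users during a conversation. You build the web app with web technologies such as HTML, JavaScript, and CSS.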

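Users interacting with an Interactive Canvas Action have a back-and-forth conversation with Google Assistant to accomplish their goal. With Interactive Canvas, however, the responses in this conversation play out within the context of your web app. To connect your conversational Action to your web app, you must include the Interactive Canvas API in your web app code: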

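- Interactive Canvas library: A JavaScript library that you include in your web app to enable communication between the web app and your conversational Action through an API. For details, see the Interactive Canvas API documentation.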

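In addition to including the Interactive Canvas library, you must return the Canvas response type in your conversation to open your web app on the user's device. You can also use a Canvas response to update your web app based on the user's input.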

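- Canvas: A response that contains the URL of the web app and the data to pass to it. Actions Builder can automatically populate the Canvas response with the matched intent and the current scene data to update the web app. Alternatively, you can send a Canvas response from a webhook using the Node.js fulfillment library. For details, see "Canvas prompts".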
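To make the shape of a Canvas response concrete, here is a minimal sketch of what a webhook might return for a "change the screen color" request. The Node.js fulfillment library (`@assistant/conversation`) provides a `Canvas` class for this; to stay self-contained, the sketch does not import it and instead models only the `url` and `data` fields as a plain object. The `command` and `color` keys, the example URL, and the rule of sending the URL only on the first turn are assumptions for illustration.

```typescript
// Simplified stand-in for a Canvas response (assumption: only `url` and
// `data` are modeled; the real Canvas prompt supports more options).
interface CanvasResponse {
  url?: string; // web app to load on the device
  data?: Array<Record<string, unknown>>; // forwarded to the web app's onUpdate callback
}

// Hypothetical URL of the deployed web app.
const WEB_APP_URL = "https://example.com/cool-colors/";

// Builds a Canvas response for a color-change request.
// `firstTurn` decides whether to include the URL so the device loads the web app;
// later turns send only data to the already-loaded app (an assumed convention).
function buildColorCanvas(color: string, firstTurn: boolean): CanvasResponse {
  const response: CanvasResponse = {
    data: [{ command: "SET_COLOR", color }], // hypothetical command format
  };
  if (firstTurn) {
    response.url = WEB_APP_URL;
  }
  return response;
}

const first = buildColorCanvas("blue", true);
console.log(JSON.stringify(first));
```

Keeping the payload as a small command object means the web app only needs to understand a handful of command names, whichever side (Actions Builder or a webhook) produces the response.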

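To illustrate how Interactive Canvas works, consider a hypothetical Action called Cool Colors that changes the device's screen to a color the user specifies. After the user invokes the Action, the following flow takes place: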

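- The user says "Turn the screen blue" to the Assistant device.

- The Actions on Google platform routes the user's request to your conversational logic to match an intent.

- The platform matches the intent with the Action's scene, which triggers an event and sends a Canvas response to the device. The device loads the web app using the URL provided in the response (if it isn't already loaded).

- When the web app loads, it registers callbacks with the Interactive Canvas API. If the Canvas response contains a `data` field, the parameter values of the `data` field are passed to the web app's registered `onUpdate` callback. In this example, the conversational logic sends a Canvas response whose `data` field includes a variable with the value `blue`.

- On receiving the `data` value of the Canvas response, the `onUpdate` callback can run custom logic in the web app and make the defined changes. In this example, the `onUpdate` callback reads the color from `data` and turns the screen blue.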
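The web-app side of the Cool Colors flow can be sketched as follows. On a real device, the Interactive Canvas library provides a global `interactiveCanvas` object whose `ready(callbacks)` method registers callbacks such as `onUpdate`; because this sketch must run outside a device, `FakeInteractiveCanvas` is a hypothetical stand-in that only mimics registration and delivery. It assumes, as in the Actions Builder version of the API, that `data` arrives as an array, and it uses a hypothetical `appScreen` object in place of the page's background color.

```typescript
// Data delivered to onUpdate (assumption: an array of plain objects).
type UpdateData = Array<Record<string, unknown>>;

interface CanvasCallbacks {
  onUpdate(data: UpdateData): void;
}

// Hypothetical stand-in for the library's global `interactiveCanvas` object.
class FakeInteractiveCanvas {
  private callbacks: CanvasCallbacks | null = null;

  // Mirrors the registration step: the web app hands over its callbacks once loaded.
  ready(callbacks: CanvasCallbacks): void {
    this.callbacks = callbacks;
  }

  // Simulates the platform forwarding a Canvas response's `data` field.
  deliver(data: UpdateData): void {
    this.callbacks?.onUpdate(data);
  }
}

// Hypothetical screen state; a real web app would set document.body.style.backgroundColor.
const appScreen = { backgroundColor: "white" };

const interactiveCanvas = new FakeInteractiveCanvas();

// Register the onUpdate callback that applies color changes.
interactiveCanvas.ready({
  onUpdate(data) {
    for (const item of data) {
      const color = item.color;
      if (item.command === "SET_COLOR" && typeof color === "string") {
        appScreen.backgroundColor = color;
      }
    }
  },
});

// The conversation sends a Canvas response whose data carries the color "blue".
interactiveCanvas.deliver([{ command: "SET_COLOR", color: "blue" }]);
console.log(appScreen.backgroundColor); // prints blue
```

Ignoring unknown commands inside `onUpdate` keeps the web app tolerant of data it was not written to handle.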

Client-side and server-side fulfillment

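When building an Interactive Canvas Action, you can choose between two fulfillment paths: server fulfillment or client fulfillment. With server fulfillment, you primarily use APIs that require a webhook. With client fulfillment, you use client-side APIs and, if needed, a webhook for non-Canvas features such as account linking.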

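If you choose to build with server webhook fulfillment during project creation, you must deploy a webhook to handle the conversational logic, write client-side JavaScript to update the web app, and manage the communication between the two.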

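If you choose client fulfillment (currently available in Developer Preview), you can use the new client-side APIs to build your Action's logic in the web app. This simplifies development, reduces latency between conversational turns, and lets you use on-device capabilities. If needed, you can also switch from client-side to server-side logic.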
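The trade-off between the two paths can be illustrated with a small routing sketch: the web app fulfills simple requests locally and defers the rest, such as account linking, to a webhook. This is not the client-side fulfillment API; `MatchedIntent`, `routeIntent`, and the intent names `change_color` and `link_account` are hypothetical and serve only to show where the client/server split might sit.

```typescript
// Hypothetical shape of a matched intent as the web app might see it
// under client-side fulfillment (an assumption, not a real API type).
interface MatchedIntent {
  intentName: string;
  params: Record<string, string>;
}

// Where a given request is fulfilled.
type Route =
  | { where: "client"; color: string }
  | { where: "webhook"; intentName: string };

// Intents this sketch assumes the web app can fulfill on its own.
const CLIENT_INTENTS = new Set<string>(["change_color"]);

function routeIntent(intent: MatchedIntent): Route {
  const color = intent.params["color"];
  if (CLIENT_INTENTS.has(intent.intentName) && color) {
    // Handled entirely in the web app: no webhook round trip.
    return { where: "client", color };
  }
  // Everything else (e.g. account linking) falls back to server-side logic.
  return { where: "webhook", intentName: intent.intentName };
}

console.log(routeIntent({ intentName: "change_color", params: { color: "blue" } }));
console.log(routeIntent({ intentName: "link_account", params: {} }));
```

Requests that stay on the client avoid a network round trip, which is the source of the latency savings described above.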

To learn more about client-side capabilities, see "Build with client-side fulfillment".

Next steps

To learn how to build a web app for Interactive Canvas, see Web app.

To see the code for a complete Interactive Canvas Action, see the sample on GitHub.