Page Summary

- Media responses in Google Actions allow for audio playback longer than the 240-second SSML limit and work on both audio-only and visual devices.

- Media responses should use a candidate with both the RICH_RESPONSE and LONG_FORM_AUDIO surface capabilities, and audio must be in a publicly accessible MP3 format via HTTPS.

- Media responses include a single-track card with visual controls for playback and progress, and also support voice controls handled by Google Assistant.

- Media status events like FINISHED, PAUSED, STOPPED, and FAILED are generated by Google Assistant to inform your Action of playback progress, which you handle in your webhook code.

- You can create playlists or implement looping mode by including multiple MediaObject items or setting the repeat_mode property to ALL.

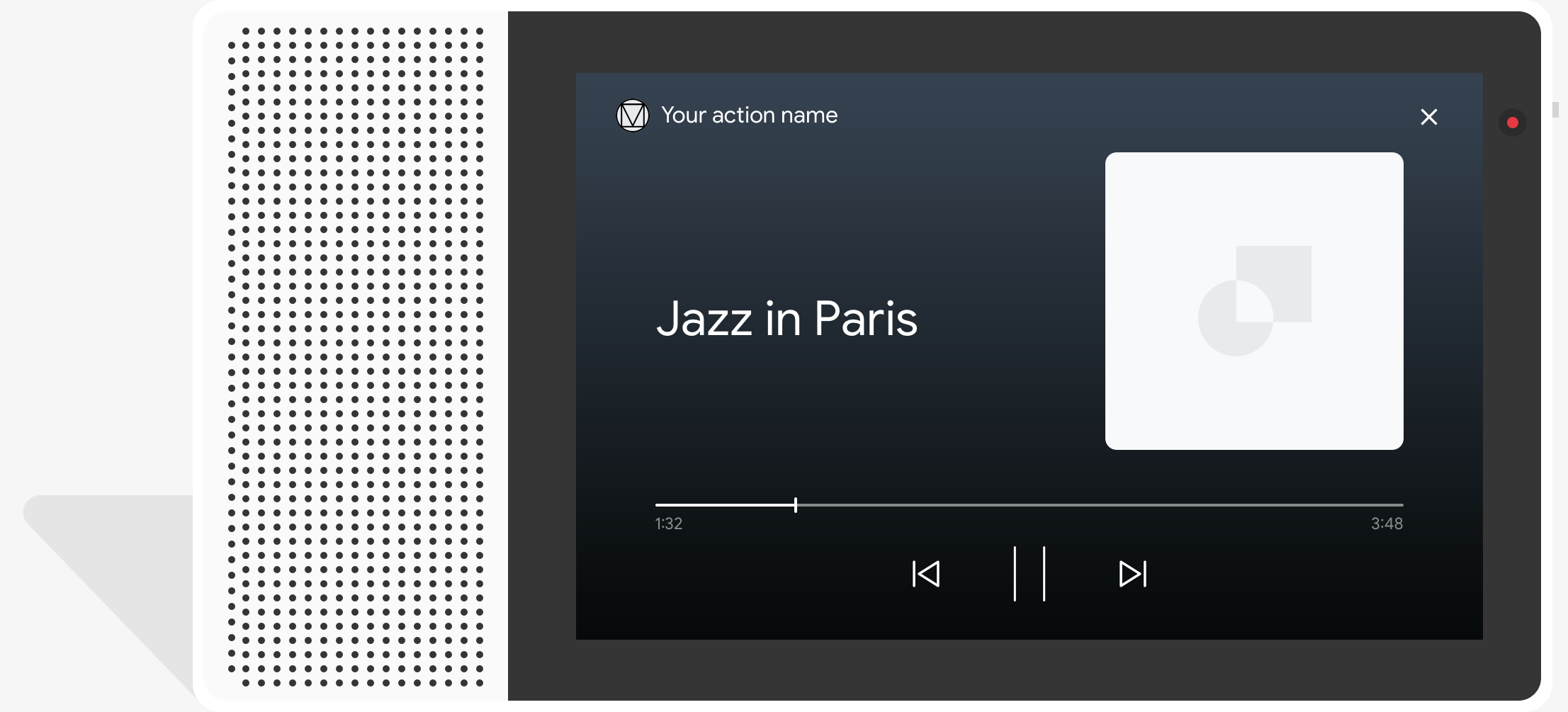

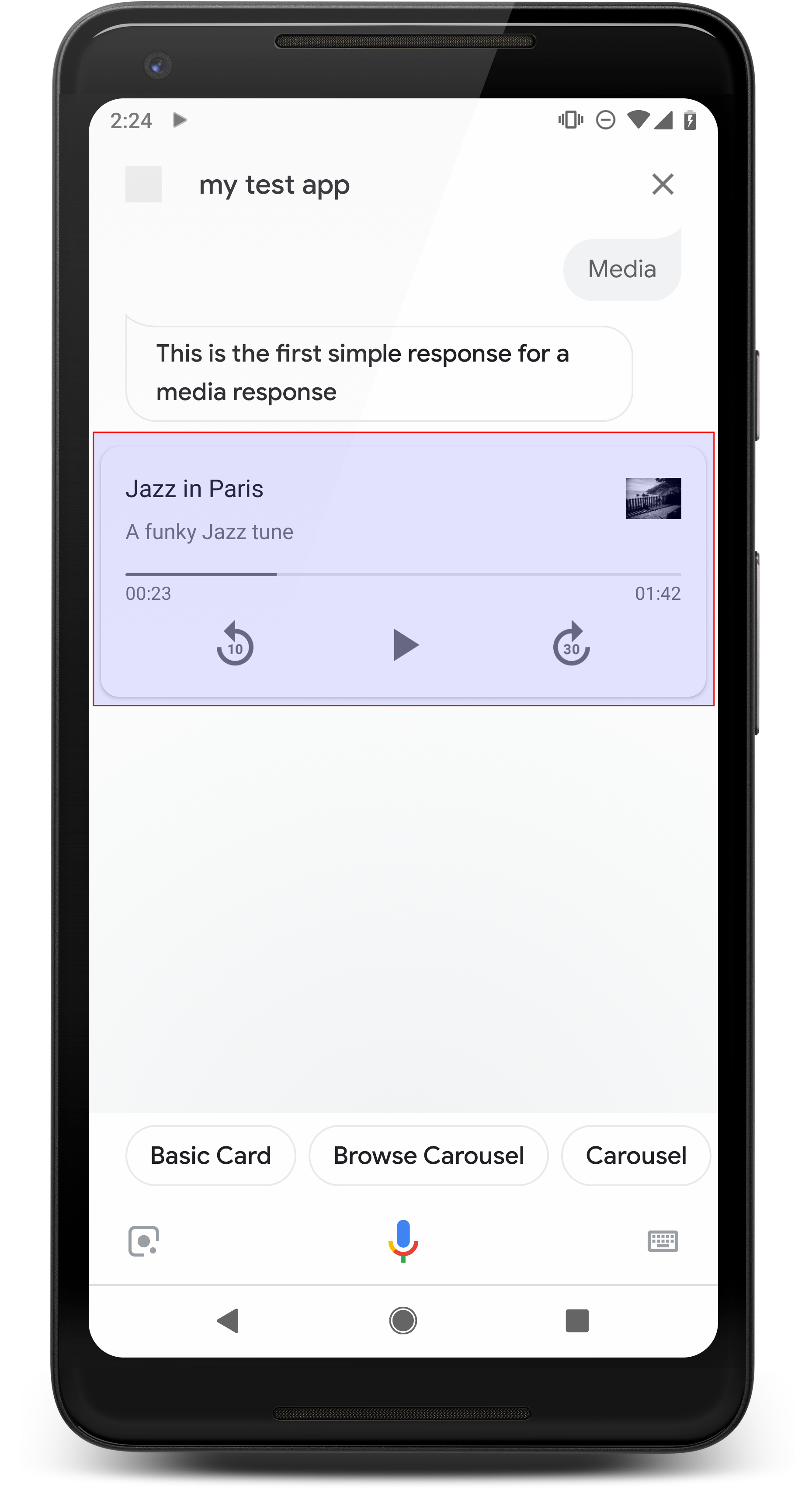

Media responses let your Actions play audio content with a playback duration longer than the 240-second limit of SSML. Media responses work on both audio-only devices and devices that can display visual content. On a display, media responses are accompanied by a visual component with media controls and (optionally) a still image.

When defining a media response, use a candidate with both the RICH_RESPONSE and LONG_FORM_AUDIO surface capabilities so that Google Assistant only returns the rich response on supported devices. You can only use one rich response per content object in a prompt.
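In webhook code, a similar guard can be applied before adding a media response. The following is a minimal sketch, not part of the official samples: supportsMediaResponse is a hypothetical helper, and it assumes the device capabilities arrive as an array of strings (for example, from conv.device.capabilities in the Node.js fulfillment library).

```javascript
// Capabilities a device needs before it can render a media response.
const REQUIRED_CAPABILITIES = ['RICH_RESPONSE', 'LONG_FORM_AUDIO'];

// Hypothetical helper: returns true only when every required capability
// appears in the list reported for the device.
function supportsMediaResponse(capabilities) {
  const available = new Set(capabilities || []);
  return REQUIRED_CAPABILITIES.every((capability) => available.has(capability));
}
```

A handler could call this helper first and fall back to a short spoken prompt when it returns false.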

Audio for playback must be in a correctly formatted MP3 file. MP3 files must be hosted on a web server and be publicly available through an HTTPS URL. Live streaming is only supported for the MP3 format.
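A quick pre-flight check can catch URLs that do not meet these hosting requirements before they reach a prompt. This is only a sketch: checkMediaUrl is a hypothetical helper, and the file-extension test is a heuristic (a live stream URL might not end in .mp3), so it is reported as a hint rather than an error. It cannot confirm that the file is publicly reachable or correctly encoded.

```javascript
// Hypothetical pre-flight check for a media URL.
function checkMediaUrl(rawUrl) {
  let parsed;
  try {
    parsed = new URL(rawUrl);
  } catch (err) {
    return { ok: false, reason: 'not a valid URL' };
  }
  if (parsed.protocol !== 'https:') {
    return { ok: false, reason: 'URL must use HTTPS' };
  }
  // Heuristic only: the path extension does not prove the audio format.
  const looksLikeMp3 = parsed.pathname.toLowerCase().endsWith('.mp3');
  return { ok: true, looksLikeMp3 };
}
```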

Behavior

The primary component of a media response is the single-track card. The card allows the user to do the following:

- Replay the last 10 seconds

- Skip forward 30 seconds

- View the total length of the media content

- View a progress indicator for media playback

- View the elapsed playback time

Media responses support the following audio controls for voice interactions, all of which are handled by Google Assistant:

- "Ok Google, play."

- "Ok Google, pause."

- "Ok Google, stop."

- "Ok Google, start over."

Users can also control the volume by saying phrases like, "Hey Google, turn the volume up." or "Hey Google, set the volume to 50 percent." Intents in your Action take precedence if they handle similar training phrases. Let Assistant handle these user requests unless your Action has a specific reason to.

Behavior on Android phones

On Android phones, media controls are also available while the phone is locked. Media controls also appear in the notification area, and users can see media responses when any of the following conditions are met:

- Google Assistant is in the foreground, and the phone screen is on.

- The user leaves Google Assistant while audio is playing and returns to Google Assistant within 10 minutes of playback completion. On returning to Google Assistant, the user sees the media card and suggestion chips.

Properties

Media responses have the following properties:

| Property | Type | Requirement | Description |
|---|---|---|---|
| media_type | MediaType | Required | Media type of the provided response. Return MEDIA_STATUS_ACK when acknowledging a media status. |
| start_offset | string | Optional | Seek position to start the first media track. Provide the value in seconds, with fractional seconds expressed at no more than nine decimal places, and end in the suffix "s". For example, 3 seconds and 1 nanosecond is expressed as "3.000000001s". |
| optional_media_controls | array of OptionalMediaControls | Optional | Opt-in property to receive callbacks when a user changes their media playback status (such as by pausing or stopping media playback). |
| media_objects | array of MediaObject | Required | Represents the media objects to include in the prompt. When acknowledging a media status with MEDIA_STATUS_ACK, do not provide media objects. |
| first_media_object_index | integer | Optional | 0-based index of the first MediaObject in media_objects to play. If unspecified, zero, or out-of-bounds, playback starts at the first MediaObject. |
| repeat_mode | RepeatMode | Optional | Repeat mode for the list of media objects. |
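The start_offset format is easy to get wrong when it is built from floating-point numbers. The sketch below keeps whole seconds and nanoseconds as separate integers to avoid rounding; formatStartOffset is a hypothetical helper, not part of any Google library.

```javascript
// Hypothetical helper: formats whole seconds plus nanoseconds as a
// start_offset string with at most nine fractional digits and an "s" suffix.
function formatStartOffset(seconds, nanos = 0) {
  if (!Number.isInteger(seconds) || seconds < 0) {
    throw new RangeError('seconds must be a non-negative integer');
  }
  if (!Number.isInteger(nanos) || nanos < 0 || nanos > 999999999) {
    throw new RangeError('nanos must be an integer from 0 to 999999999');
  }
  if (nanos === 0) {
    return `${seconds}s`;
  }
  // Pad to nine digits, then drop trailing zeros for a compact value.
  const fraction = String(nanos).padStart(9, '0').replace(/0+$/, '');
  return `${seconds}.${fraction}s`;
}
```

For example, formatStartOffset(3, 1) yields the "3.000000001s" value from the table, and formatStartOffset(2, 123450000) yields "2.12345s".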

Sample code

YAML

candidates:
  - first_simple:
      variants:
        - speech: This is a media response.
    content:
      media:
        start_offset: 2.12345s
        optional_media_controls:
          - PAUSED
          - STOPPED
        media_objects:
          - name: Media name
            description: Media description
            url: 'https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3'
            image:
              large:
                url: 'https://storage.googleapis.com/automotive-media/album_art.jpg'
                alt: Jazz in Paris album art
        media_type: AUDIO

JSON

{
  "candidates": [
    {
      "first_simple": {
        "variants": [
          {
            "speech": "This is a media response."
          }
        ]
      },
      "content": {
        "media": {
          "start_offset": "2.12345s",
          "optional_media_controls": [
            "PAUSED",
            "STOPPED"
          ],
          "media_objects": [
            {
              "name": "Media name",
              "description": "Media description",
              "url": "https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3",
              "image": {
                "large": {
                  "url": "https://storage.googleapis.com/automotive-media/album_art.jpg",
                  "alt": "Jazz in Paris album art"
                }
              }
            }
          ],
          "media_type": "AUDIO"
        }
      }
    }
  ]
}

Node.js

// Media response
// JAZZ_IN_PARIS_IMAGE is assumed to be defined elsewhere (album art URL and alt text).
app.handle('media', (conv) => {
  conv.add('This is a media response');
  conv.add(new Media({
    mediaObjects: [
      {
        name: 'Media name',
        description: 'Media description',
        url: 'https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3',
        image: {
          large: JAZZ_IN_PARIS_IMAGE,
        }
      }
    ],
    mediaType: 'AUDIO',
    optionalMediaControls: ['PAUSED', 'STOPPED'],
    startOffset: '2.12345s'
  }));
});

JSON

{
  "session": {
    "id": "session_id",
    "params": {},
    "languageCode": ""
  },
  "prompt": {
    "override": false,
    "content": {
      "media": {
        "mediaObjects": [
          {
            "name": "Media name",
            "description": "Media description",
            "url": "https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3",
            "image": {
              "large": {
                "alt": "Jazz in Paris album art",
                "height": 0,
                "url": "https://storage.googleapis.com/automotive-media/album_art.jpg",
                "width": 0
              }
            }
          }
        ],
        "mediaType": "AUDIO",
        "optionalMediaControls": [
          "PAUSED",
          "STOPPED"
        ]
      }
    },
    "firstSimple": {
      "speech": "This is a media response",
      "text": "This is a media response"
    }
  }
}

Receiving media status

During or after media playback for a user, Google Assistant can generate media status events to inform your Action of playback progress. Handle these status events in your webhook code to appropriately route users when they pause, stop, or finish media playback.

Google Assistant returns a status event from the following list based on media playback progress and user queries:

- FINISHED: The user completed media playback (or skipped to the next piece of media), and the transition is not to a conversation exit. This status also maps to the MEDIA_STATUS_FINISHED system intent.

- PAUSED: The user paused media playback. Opt in to receiving this status event with the optional_media_controls property. This status also maps to the MEDIA_STATUS_PAUSED system intent.

- STOPPED: The user stopped or exited media playback. Opt in to receiving this status event with the optional_media_controls property. This status also maps to the MEDIA_STATUS_STOPPED system intent.

- FAILED: Media playback failed. This status also maps to the MEDIA_STATUS_FAILED system intent.

During media playback, a user might provide a query that can be interpreted as both a media pause and stop event (like "stop", "cancel", or "exit"). In that situation, Assistant provides the actions.intent.CANCEL system intent to your Action, generates a media status event with the STOPPED status value, and exits your Action completely.

When Assistant generates a media status event with the PAUSED or STOPPED status value, respond with a media response that contains only an acknowledgement (of type MEDIA_STATUS_ACK).

Media progress

The current media playback progress is available in the context.media.progress field for webhook requests. You can use the media progress as a start time offset to resume playback at the point where media playback ended. To apply the start time offset to a media response, use the start_offset property.

Sample code

Node.js

// Media status
app.handle('media_status', (conv) => {
  const mediaStatus = conv.intent.params.MEDIA_STATUS.resolved;
  switch (mediaStatus) {
    case 'FINISHED':
      conv.add('Media has finished playing.');
      break;
    case 'FAILED':
      conv.add('Media has failed.');
      break;
    // PAUSED and STOPPED share the same handling.
    case 'PAUSED':
    case 'STOPPED':
      if (conv.request.context) {
        // Persist the media progress value
        const progress = conv.request.context.media.progress;
      }
      // Acknowledge pause/stop
      conv.add(new Media({
        mediaType: 'MEDIA_STATUS_ACK'
      }));
      break;
    default:
      conv.add('Unknown media status received.');
  }
});
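Once the progress value has been persisted, it can be fed back as the start offset when playback resumes. The sketch below builds only the media part of a webhook prompt as a plain object. buildResumeMedia is a hypothetical helper, and it assumes the stored progress is a duration string such as "12.5s"; if the value is missing or in another shape, playback simply starts from the beginning.

```javascript
// Hypothetical helper: builds the media portion of a prompt that resumes
// playback from previously stored progress.
function buildResumeMedia(progress, mediaObjects) {
  const media = { mediaType: 'AUDIO', mediaObjects };
  // Assumption: progress looks like "12.5s" (seconds, up to nine decimals).
  if (typeof progress === 'string' && /^\d+(\.\d{1,9})?s$/.test(progress)) {
    media.startOffset = progress;
  }
  return media;
}
```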

Return a playlist

You can add more than one audio file in your response to create a playlist. When the first track is finished playing, the next track plays automatically, and this continues until each track has played. Users can also press the Next button on screen, or say "Next" or something similar to skip to the next track.

The Next button is disabled on the last track of the playlist. However, if you enable looping mode, the playlist begins again from the first track. To learn more about looping mode, see Implement looping mode.

To create a playlist, include more than one MediaObject in the media_objects array. The following code snippet shows a prompt that returns a playlist of three tracks:

{
  "candidates": [
    {
      "content": {
        "media": {
          "media_objects": [
            {
              "name": "1. Jazz in Paris",
              "description": "Song 1 of 3",
              "url": "https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3",
              "image": {
                "large": {
                  "url": "https://storage.googleapis.com/automotive-media/album_art.jpg",
                  "alt": "Album cover of an ocean view",
                  "height": 1600,
                  "width": 1056
                }
              }
            },
            {
              "name": "2. Jazz in Paris",
              "description": "Song 2 of 3",
              "url": "https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3",
              "image": {
                "large": {
                  "url": "https://storage.googleapis.com/automotive-media/album_art.jpg",
                  "alt": "Album cover of an ocean view",
                  "height": 1600,
                  "width": 1056
                }
              }
            },
            {
              "name": "3. Jazz in Paris",
              "description": "Song 3 of 3",
              "url": "https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3",
              "image": {
                "large": {
                  "url": "https://storage.googleapis.com/automotive-media/album_art.jpg",
                  "alt": "Album cover of an ocean view",
                  "height": 1600,
                  "width": 1056
                }
              }
            }
          ]
        }
      }
    }
  ]
}

Implement looping mode

Looping mode allows you to provide an audio response that automatically repeats. You can use this mode to repeat a single track or to loop through a playlist. If the user says "Next" or something similar for a single looped track, the song begins again. For looped playlists, a user saying "Next" starts the next track in the playlist.

To implement looping mode, add the repeat_mode field to your prompt and set its value to ALL. This addition allows your media response to loop to the beginning of the first media object when the end of the last media object is reached.

The following code snippet shows a prompt that returns a looping track:

{
  "candidates": [
    {
      "content": {
        "media": {
          "media_objects": [
            {
              "name": "Jazz in Paris",
              "description": "Single song (repeated)",
              "url": "https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3",
              "image": {
                "large": {
                  "url": "https://storage.googleapis.com/automotive-media/album_art.jpg",
                  "alt": "Album cover of an ocean view",
                  "height": 1600,
                  "width": 1056
                }
              }
            }
          ],
          "repeat_mode": "ALL"
        }
      }
    }
  ]
}
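Playlists and looping differ only in how many media objects are listed and whether repeat_mode is set, so both prompts can be generated from one function. The following is a rough sketch under that assumption: buildPlaylistPrompt and its loop option are hypothetical names, images are omitted for brevity, and media_type is set to AUDIO because the properties table lists it as required.

```javascript
// Hypothetical helper: builds a prompt object for one or more tracks.
// Each track is expected to provide { name, url }.
function buildPlaylistPrompt(tracks, { loop = false } = {}) {
  if (!Array.isArray(tracks) || tracks.length === 0) {
    throw new Error('at least one track is required');
  }
  const media = {
    media_type: 'AUDIO',
    media_objects: tracks.map((track, index) => ({
      name: track.name,
      description: `Song ${index + 1} of ${tracks.length}`,
      url: track.url,
    })),
  };
  // repeat_mode ALL restarts from the first media object after the last one.
  if (loop) {
    media.repeat_mode = 'ALL';
  }
  return { candidates: [{ content: { media } }] };
}
```

Passing a single track with loop set to true produces a looping track, and passing several tracks without it produces a plain playlist.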