Page Summary

- Interactive Canvas is a Google Assistant framework that lets developers create immersive visual experiences within Conversational Actions through a full-screen, interactive web app.

- Use Interactive Canvas to create full-screen visuals, custom animations, data visualizations, and custom layouts or GUIs in your Action.

- Interactive Canvas Actions are currently supported on smart displays and on Android mobile devices with Google Search App version 9.86 or later.

- An Interactive Canvas Action consists of a Conversational Action built with Actions Builder or the Actions SDK, plus a web app built with web technologies that communicates with the Action through the Interactive Canvas API.

- You can build your Interactive Canvas Action's logic with either server fulfillment (a webhook) or client fulfillment (client-side APIs).

Interactive Canvas is a framework built on Google Assistant that allows developers to add visual, immersive experiences to Conversational Actions. This visual experience is an interactive web app that Assistant sends as a response to the user in conversation. Unlike rich responses that exist in-line in an Assistant conversation, the Interactive Canvas web app renders as a full-screen web view.

Use Interactive Canvas if you want to do any of the following in your Action:

- Create full-screen visuals

- Create custom animations and transitions

- Visualize data

- Create custom layouts and GUIs

Supported devices

Interactive Canvas is currently available on the following devices:

- Smart displays

- Android mobile devices

How it works

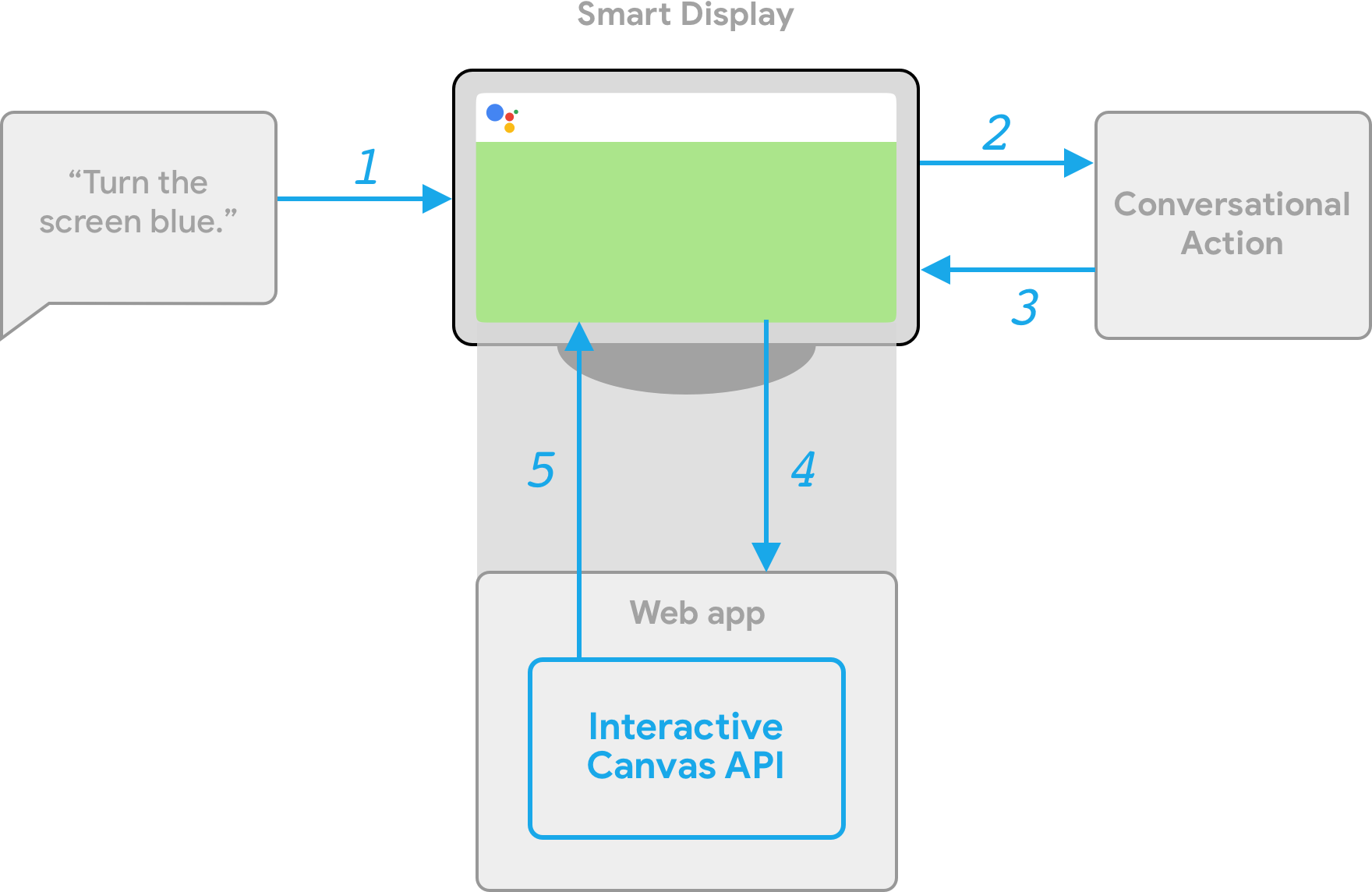

An Action that uses Interactive Canvas consists of two main components:

- Conversational Action: An Action that uses a conversational interface to fulfill user requests. You can use either Actions Builder or the Actions SDK to build your conversation.

- Web app: A front-end web app with customized visuals that your Action sends as a response to users during a conversation. You build the web app with web technologies like HTML, JavaScript, and CSS.

Users interacting with an Interactive Canvas Action have a back-and-forth conversation with Google Assistant to fulfill their goal. With Interactive Canvas, however, most of this conversation takes place within the context of your web app. To connect your Conversational Action to your web app, you must include the Interactive Canvas library in your web app code.

- Interactive Canvas library: A JavaScript library that you include in the web app to enable communication between the web app and your Conversational Action using an API. For more information, see the Interactive Canvas API documentation.
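To make the library's role concrete, here is a minimal TypeScript sketch of a web app registering an onUpdate callback. It assumes the library exposes a global `interactiveCanvas` object with a `ready` method that accepts your callbacks. To keep the sketch testable outside a device, it types only that subset through a hypothetical `InteractiveCanvasLike` interface and receives the object as a parameter. `registerCallbacks` and `render` are illustrative names, and because the exact shape of the value passed to onUpdate should be confirmed in the API reference, the callback accepts either a single object or a list of objects.

```typescript
// Hypothetical typing for the subset of the Interactive Canvas API used here.
// Assumption: the real library exposes a global `interactiveCanvas` whose
// `ready` method takes your callbacks; confirm signatures in the API reference.
interface CanvasCallbacks {
  onUpdate(data: unknown): void;
}

interface InteractiveCanvasLike {
  ready(callbacks: CanvasCallbacks): void;
}

// Registers an onUpdate callback that forwards each object in a Canvas
// response's data to `render`, a hypothetical function your web app supplies.
function registerCallbacks(
  canvas: InteractiveCanvasLike,
  render: (payload: Record<string, unknown>) => void,
): void {
  canvas.ready({
    onUpdate(data: unknown) {
      // Accept either a single object or a list of objects (shape assumed).
      const items: unknown[] = Array.isArray(data) ? data : [data];
      for (const item of items) {
        // Skip null, primitives, and nested arrays; forward plain objects only.
        if (item !== null && typeof item === "object" && !Array.isArray(item)) {
          render(item as Record<string, unknown>);
        }
      }
    },
  });
}

// In the browser, after loading the library, you might call:
// registerCallbacks(interactiveCanvas, (payload) => { /* update the page */ });
```

Passing the API object in, rather than reading the global directly, lets you exercise the web app's update logic with a fake object in ordinary unit tests.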

In addition to including the Interactive Canvas library, you must return the Canvas response type in your conversation to open your web app on the user's device. You can also use a Canvas response to update your web app based on the user's input.

- Canvas: A response that contains the URL of the web app and the data to pass to it. Actions Builder can automatically populate the Canvas response with the matched intent and current scene data to update the web app. Alternatively, you can send a Canvas response from a webhook using the Node.js fulfillment library. For more information, see Canvas prompts.
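As a rough picture of what a Canvas response carries, the TypeScript sketch below models only the two parts named above: the web app's URL and the data passed to it. The `CanvasResponse` type, the placeholder URL, and the `color` field are hypothetical. The real prompt defines additional fields, and this sketch assumes `data` is carried as a list of objects, which you should confirm in the Canvas prompts reference.

```typescript
// Hypothetical shape of a Canvas response: the web app URL plus the data
// passed to it. Field names are assumptions; the real prompt has more fields.
interface CanvasResponse {
  url: string;
  data: Array<Record<string, unknown>>;
}

// Placeholder URL for a color-changing web app (hypothetical).
const WEB_APP_URL = "https://example.com/cool-colors/";

// Builds the response the conversational logic might send after
// matching a "change the color" request.
function colorCanvasResponse(color: string): CanvasResponse {
  return {
    url: WEB_APP_URL,
    data: [{ color }],
  };
}

console.log(JSON.stringify(colorCanvasResponse("blue")));
// prints {"url":"https://example.com/cool-colors/","data":[{"color":"blue"}]}
```

In a real webhook you would use the Node.js fulfillment library rather than building this object by hand; the sketch only shows which pieces of information travel to the device.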

To illustrate how Interactive Canvas works, imagine a hypothetical Action called Cool Colors that changes the device screen color to a color the user specifies. After the user invokes the Action, the following flow happens:

- The user says "Turn the screen blue" to the Assistant device.

- The Actions on Google platform routes the user's request to your conversational logic to match an intent.

- The platform matches the intent with the Action's scene, which triggers an event and sends a Canvas response to the device. The device loads the web app using the URL provided in the response (if the web app has not already been loaded).

- When the web app loads, it registers callbacks with the Interactive Canvas API. If the Canvas response contains a data field, the object value of the data field is passed into the web app's registered onUpdate callback. In this example, the conversational logic sends a Canvas response with a data field that includes a variable with the value blue.

- Upon receiving the data value of the Canvas response, the onUpdate callback can execute custom logic for your web app and make the defined changes. In this example, the onUpdate callback reads the color from data and turns the screen blue.
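The final step of this walk-through, the onUpdate callback turning the screen blue, might look like the following TypeScript sketch. It assumes each data object carries a `color` string; that field name, the `applyColorUpdate` function, and the `Paintable` interface are hypothetical. In the browser, `document.body` satisfies `Paintable` structurally, so the same logic can run against a plain object in tests.

```typescript
// Anything with a writable background color. document.body fits this
// shape in the browser; tests can pass a plain object instead.
interface Paintable {
  style: { backgroundColor: string };
}

// Handles one data object from a Canvas response. The `color` field name
// is a hypothetical choice for the Cool Colors example.
// Returns true if the screen was repainted.
function applyColorUpdate(payload: Record<string, unknown>, target: Paintable): boolean {
  const color = payload["color"];
  if (typeof color !== "string" || color.trim() === "") {
    return false; // This update carries no usable color, so leave the screen as is.
  }
  target.style.backgroundColor = color.trim();
  return true;
}

// Example: the update sent after "Turn the screen blue".
const demoScreen: Paintable = { style: { backgroundColor: "white" } };
applyColorUpdate({ color: "blue" }, demoScreen);
console.log(demoScreen.style.backgroundColor); // prints "blue"
```

In the web app, you would call applyColorUpdate from the onUpdate callback registered with the Interactive Canvas API, passing it the data object from the Canvas response and document.body as the target.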

Client-side and server-side fulfillment

When building an Interactive Canvas Action, you can choose between two fulfillment implementation paths: server fulfillment or client fulfillment. With server fulfillment, you primarily use APIs that require a webhook. With client fulfillment, you can use client-side APIs and, if needed, APIs that require a webhook for non-Canvas features (such as account linking).

If you choose server webhook fulfillment when you create your project, you must deploy a webhook that handles the conversational logic, and write client-side JavaScript that updates the web app and manages communication between the two.

If you choose to build with client fulfillment (currently available in Developer Preview), you can use new client-side APIs to build your Action’s logic exclusively in the web app, which simplifies the development experience, reduces latency between conversational turns, and enables you to use on-device capabilities. If needed, you can also switch to server-side logic from the client.
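The difference between the two paths is where the code that turns a matched intent into a web app update runs. The TypeScript sketch below illustrates that with a hypothetical `Fulfillment` interface. None of these names belong to the Interactive Canvas API or the Actions SDK, the `change_color` intent is invented for the example, and the injected `postToWebhook` function stands in for the HTTP round trip that a real webhook call would make.

```typescript
// A matched intent as reported by the platform (hypothetical shape).
interface MatchedIntent {
  name: string;
  params: Record<string, string>;
}

// Data the web app applies to its UI, for example { color: "blue" }.
type WebAppUpdate = Record<string, unknown>;

// Hypothetical abstraction over the two fulfillment paths.
interface Fulfillment {
  handle(intent: MatchedIntent): Promise<WebAppUpdate>;
}

// Client fulfillment: the web app computes the update itself,
// so no request leaves the device for this turn.
class ClientFulfillment implements Fulfillment {
  async handle(intent: MatchedIntent): Promise<WebAppUpdate> {
    if (intent.name === "change_color") {
      return { color: intent.params["color"] ?? "white" };
    }
    return {}; // Unhandled intent: no visual change.
  }
}

// Server fulfillment: the intent goes to a webhook, which returns the update.
// postToWebhook stands in for an HTTP request to your deployed webhook.
class ServerFulfillment implements Fulfillment {
  private readonly postToWebhook: (intent: MatchedIntent) => Promise<WebAppUpdate>;

  constructor(postToWebhook: (intent: MatchedIntent) => Promise<WebAppUpdate>) {
    this.postToWebhook = postToWebhook;
  }

  handle(intent: MatchedIntent): Promise<WebAppUpdate> {
    return this.postToWebhook(intent);
  }
}
```

Both paths return the same kind of update, so the web app's rendering code does not change. What changes is whether each turn waits on a network call to your webhook, which is the source of the latency difference described above.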

For more information about client-side capabilities, see Build with client-side fulfillment.

Next steps

To learn how to build a web app for Interactive Canvas, see Web apps.

To see the code for a complete Interactive Canvas Action, see the sample on GitHub.