Make the world your canvas

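Build immersive, world-scale experiences in more than 100 countries with one of the world's largest cross-device augmented reality platforms. ARCore lets you seamlessly blend the physical and digital worlds. It combines easy-to-integrate workflows with the knowledge of the world that Google has built up through Google Maps.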

ARCore

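ARCore is Google's augmented reality SDK. It provides cross-platform APIs for building new immersive experiences on Android, iOS, Unity, and the web. By understanding the context of people, places, and things, it transforms how people play, shop, learn, create, and experience the world together.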

Features

Explore APIs and open integration solutions that simplify development and help you ship the latest immersive experiences quickly.

ARCore fundamentals

ARCore provides foundational tools for building augmented reality experiences, including:

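- Motion tracking: shows your position relative to the world.

- Anchors: track an object's position over time.

- Environmental understanding: detects the size and location of all types of surfaces.

- Depth understanding: measures the distance from a given point to multiple other points.

- Light estimation: provides information about the environment's average light intensity and color correction.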
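The depth-understanding item above can be made concrete with a little geometry. A depth map assigns a distance to each camera-image pixel. Combined with pinhole camera intrinsics, each pixel can be back-projected into a 3D point, and distances from one point to many others follow directly. This is a minimal sketch, not how ARCore itself computes depth. It assumes depth values in meters measured along the camera's optical axis, an OpenCV-style camera frame (x right, y down, z forward), and made-up intrinsics. `Intrinsics`, `back_project`, and `distances_from` are hypothetical helpers, not ARCore APIs.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

Point3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Intrinsics:
    """Hypothetical pinhole intrinsics in pixels (not read from ARCore)."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point x
    cy: float  # principal point y


def back_project(u: float, v: float, depth_m: float, k: Intrinsics) -> Point3:
    """Turn pixel (u, v) plus its depth (assumed along the optical axis)
    into a 3D point in an OpenCV-style camera frame."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def distances_from(origin: Point3, points: List[Point3]) -> List[float]:
    """Euclidean distance from one 3D point to each point in a list."""
    return [math.dist(origin, p) for p in points]


if __name__ == "__main__":
    k = Intrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
    # Reference point: the image center, 2 m away.
    origin = back_project(320, 240, 2.0, k)
    # Two other sampled pixels with their (made-up) depths.
    others = [back_project(420, 240, 2.0, k), back_project(320, 340, 1.0, k)]
    print(distances_from(origin, others))
```

With these sample values the two distances come out at roughly 0.4 m and 1.02 m. Because Euclidean distance does not depend on axis orientation, the same numbers hold if the points are expressed in a different right-handed camera convention.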

Geospatial API

Attach content remotely to any area mapped by Google Street View, and build richer, more robust experiences at global scale.

Scene Semantics

Use ML models to gain a richer understanding of the surrounding scene and the common objects in it.

Recording and Playback API

Use ARCore to record augmented reality sessions and play them back later as if they were happening live.

Depth API

Add realism through object occlusion, immersion, and interaction, and give your app a deeper understanding of the environment.

Persistent Cloud Anchors

Scan indoor spaces and reuse the saved locations later in augmented reality experiences.

Streetscape Geometry

Interact with, visualize, and transform the geometry of buildings and terrain for object occlusion and for anchoring content.

Featured partners

See how developers, teams, and brands around the world are using Google's AR tools and solutions to build.

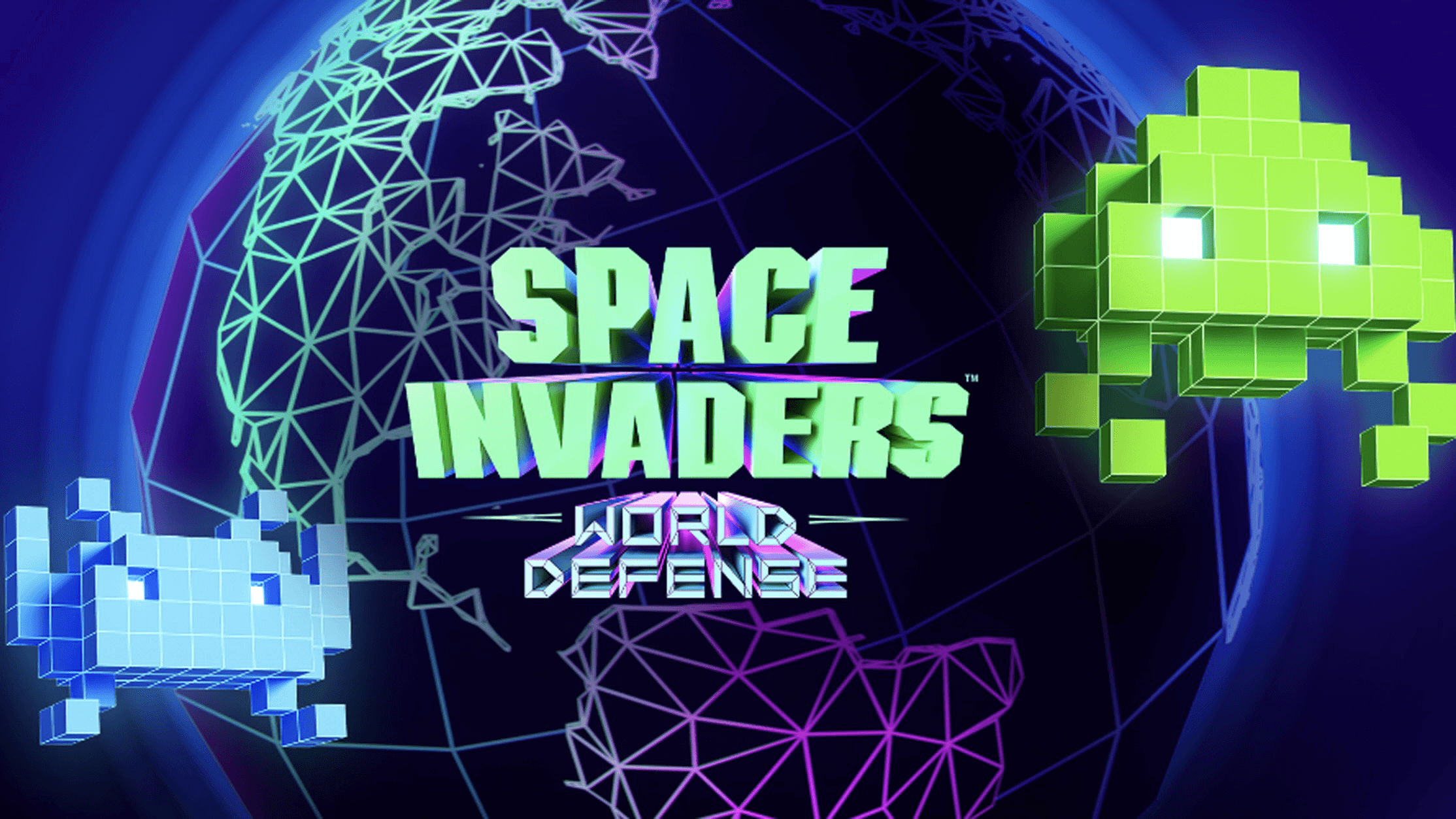

TAITO turns the world into a playground with the immersive AR game "Space INVADERS"

In "Space INVADERS: World Defense", you join the pilot corps to defend your area. To celebrate the 45th anniversary of the game's release,

Scavenger spotlights women pioneers through immersive monuments

Meet women pioneers who made important contributions to culture and science through an immersive storytelling experience built with digital art.

Gap and Mattel turn the Gap store in Times Square into a Barbie experience

Enjoy a New York shop window where you can interact with Barbie and her friends, styled after a new limited-edition fashion collection.

Community

The community of developers and creators building with ARCore keeps growing. Come join us.

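"Our whole team was very eager to try the Geospatial API. We had been envisioning a worldwide alien-invasion game for more than three years, but we were waiting for the right technology to become available so that augmented reality could finally make it possible. When we checked out Geospatial and started trying it, the results far exceeded our expectations."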

Featured hackathon submissions

Check out past winners from various ARCore hackathons.

World Ensemble

Tap your surroundings to place instruments, toggle audio and visual effects, and change the beat.

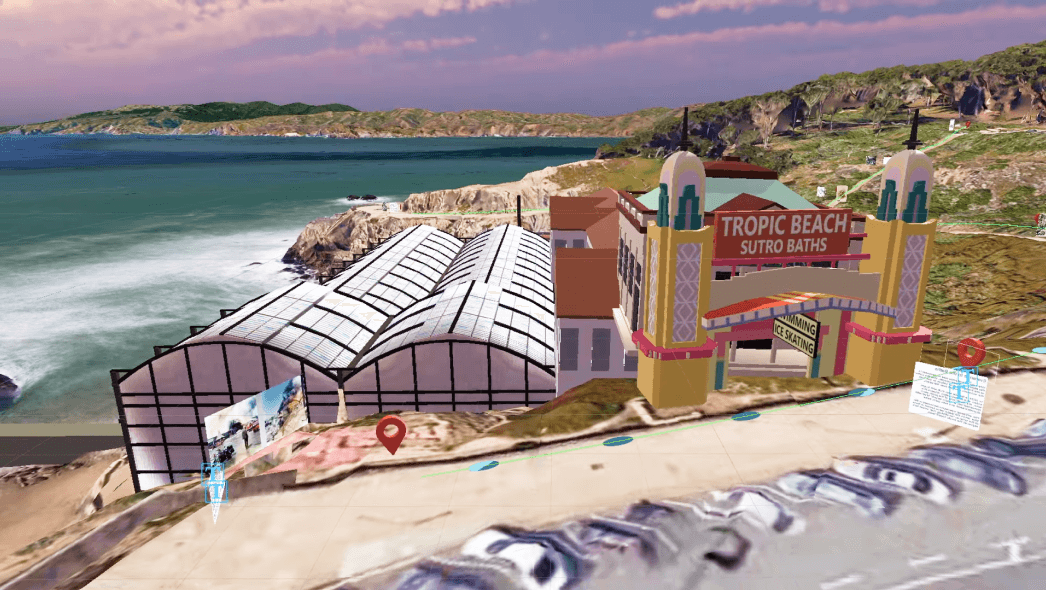

Sutro Baths AR Tour

Tour reconstructed historic structures at the ruins of Sutro Baths, a historic site in San Francisco.

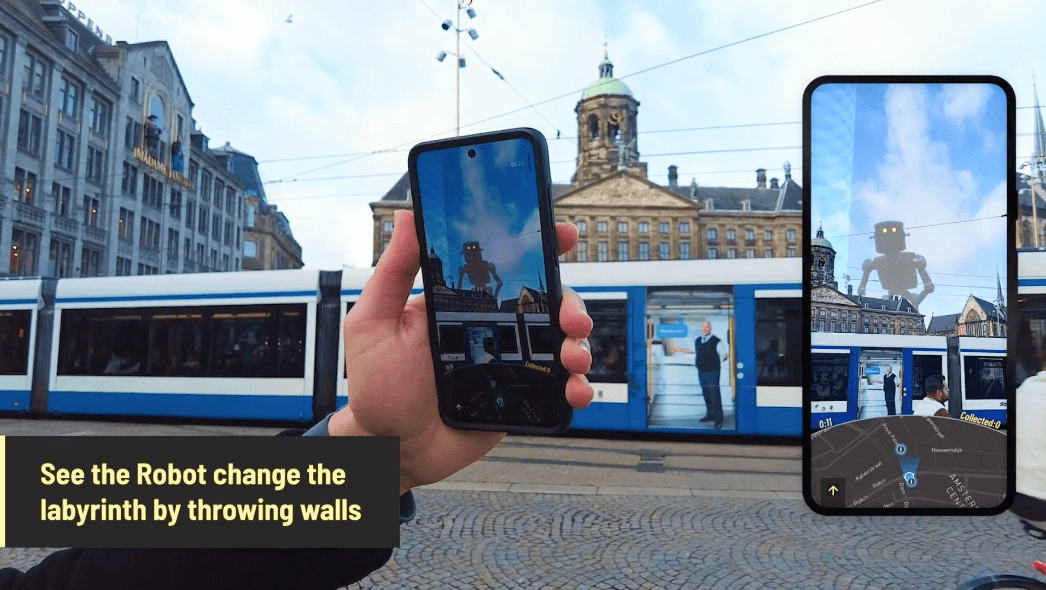

GEOMAZE - Urban Quest

Turn your city into an interactive maze to explore the world and learn about local landmarks.

Shimmy

This playable interface lets you play notes and trigger 3D animations on the facade of MIT's Simmons Hall.

Congratulations to the winners of Google's Immersive Geospatial Challenge

Meet the winners of our latest hackathon. The showcase features submissions built with Geospatial Creator across five categories, including entertainment and events, and commerce.

Congratulations to the winners of the ARCore Geospatial API Challenge

Meet the winners of our previous hackathon. The showcase features submissions built with the ARCore Geospatial API across five categories, including games and navigation.

What's new in ARCore & Geospatial Creator

Catch up on the latest news and events.

Get started

Choose your development environment.

Android

Use ARCore with Kotlin, Java, or C in Android Studio to build native Android apps.

iOS

Use ARCore to extend ARKit functionality with Objective-C or Swift in Xcode.

Web

Build augmented reality experiences with open web standards powered by the WebXR API.

Adobe Aero

Access Geospatial Creator and Google's photorealistic 3D maps in Adobe Aero.

Unity

Download ARCore Extensions and Geospatial Creator to create cross-platform AR experiences.