Page Summary

- WebP lossless compression is shown to be 23% better than ZopfliPNG and 42% better than libpng.
- WebP encoding and decoding speeds are similar to PNG, making it a viable alternative.
- WebP consistently achieves higher compression density than size-optimized PNGs for over 99% of tested web images.
- WebP offers lossy compression with alpha support, providing further opportunities for website performance improvements.
- Although WebP encoding may use more memory, decoding generally uses less memory than PNG, particularly with lossy compression.

Jyrki Alakuijala, Ph.D., Google, Inc.

Vincent Rabaud, Ph.D., Google, Inc.

Last updated: 2017-08-01

Abstract -- We compare the resource usage of WebP encoder/decoder to that of PNG in both lossless and lossy modes. We use a corpus of 12000 randomly chosen translucent PNG images from the web, and simpler measurements to show variation in performance. We have recompressed the PNGs in our corpus to compare WebP images to size-optimized PNGs. In our results we show that WebP is a good replacement for PNG for use on the web regarding both size and processing speed.

Introduction

WebP supports lossless and translucent images, making it an alternative to the PNG format. Many of the fundamental techniques used in PNG compression, such as dictionary coding, Huffman coding, and the color indexing transform, are supported in WebP as well, which results in similar speed and compression density in the worst case. At the same time, a number of new features -- such as separate entropy codes for different color channels, 2D locality of backward reference distances, and a color cache of recently used colors -- allow for improved compression density with most images.

In this work, we compare the performance of WebP to PNGs that are highly compressed using pngcrush and ZopfliPNG. We recompressed our reference corpus of web images using best practices, and compared both lossless and lossy WebP compression against this corpus. In addition to the reference corpus, we chose two larger images, one photographic and the other graphical, for speed and memory use benchmarking.

We demonstrate decoding speeds faster than those of PNG, as well as compression that is 23% denser than what can be achieved with today's PNG format. We conclude that WebP is a more efficient replacement for today's PNG image format. Furthermore, lossy image compression with lossless alpha support offers additional opportunities to speed up web sites.

Methods

Command-Line Tools

We use the following command-line tools to measure performance:

cwebp and dwebp. These tools are part of the libwebp library (compiled from head).

convert. This command-line tool is part of the ImageMagick software (6.7.7-10 2017-07-21).

pngcrush 1.8.12 (Jul 30 2017)

ZopfliPNG (Jul 17th 2017)

We use the command-line tools with their respective control flags. For example, cwebp -q 1 -m 0 means that the cwebp tool was invoked with the -q 1 and -m 0 flags.

Image Corpora

Three corpora were chosen:

A single photographic image (Figure 1),

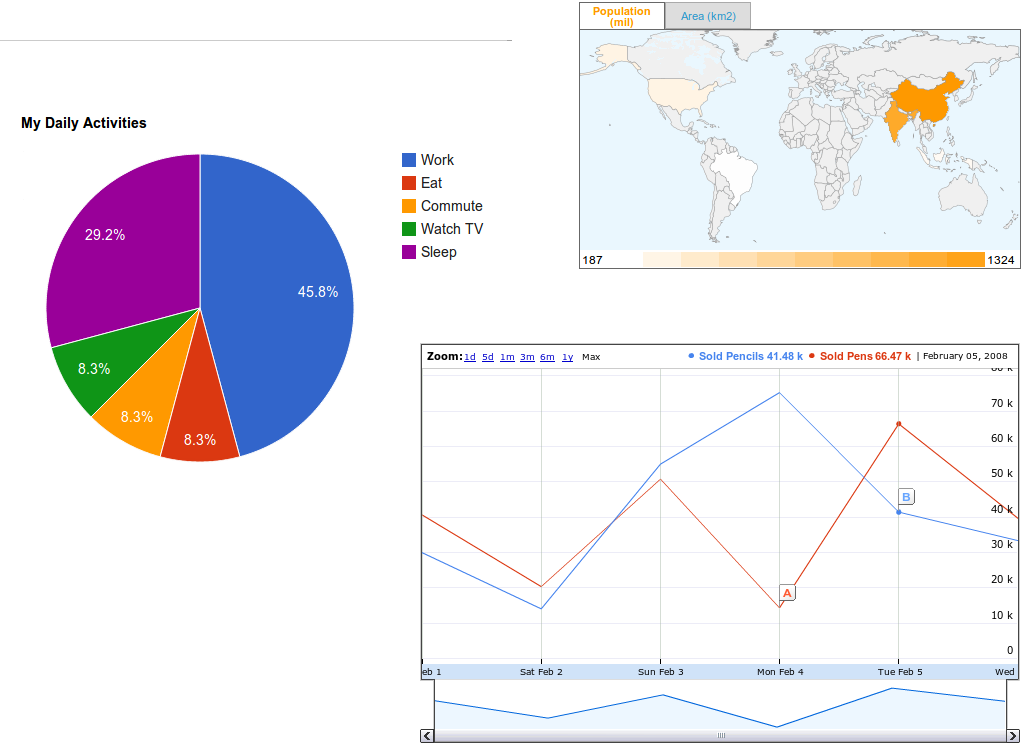

A single graphical image with translucency (Figure 2), and

A web corpus: 12000 randomly chosen PNG images, with or without translucency, crawled from the Internet. These PNG images are optimized using convert, pngcrush, and ZopfliPNG, and the smallest version of each image is used in the study.

Figure 1. Photographic image, 1024 x 752 pixels. Fire breathing "Jaipur Maharaja Brass Band" Chassepierre Belgium, Author: Luc Viatour, Photo licensed under the Creative Commons Attribution-Share Alike 3.0 Unported license. Author website is here.

Figure 2. Graphical image, 1024 x 752 pixels. Collage images from Google Chart Tools

To measure the full capability of the existing format, PNG, we have recompressed all these original PNG images using several methods:

Clamp to 8 bits per component: convert input.png -depth 8 output.png

ImageMagick(1) with no predictors: convert input.png -quality 90 output-candidate.png

ImageMagick with adaptive predictors: convert input.png -quality 95 output-candidate.png

Pngcrush(2): pngcrush -brute -rem tEXt -rem tIME -rem iTXt -rem zTXt -rem gAMA -rem cHRM -rem iCCP -rem sRGB -rem alla -rem text input.png output-candidate.png

ZopfliPNG(3): zopflipng --lossy_transparent input.png output-candidate.png

ZopfliPNG with all filters: zopflipng --iterations=500 --filters=01234mepb --lossy_8bit --lossy_transparent input.png output-candidate.png
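The selection step implied by the list above (keep the smallest output across all recompression methods) can be sketched in Python as follows. This is only an illustration: the helper name `smallest_candidate` and the candidate file names are hypothetical, and the study does not describe how the selection was actually scripted.

```python
import os


def smallest_candidate(paths):
    """Return (path, size_in_bytes) for the smallest existing file in `paths`.

    Candidates that do not exist (for example, because a tool failed on
    that image) are skipped. Raises FileNotFoundError if none exist.
    """
    sizes = [(os.path.getsize(p), p) for p in paths if os.path.isfile(p)]
    if not sizes:
        raise FileNotFoundError("no candidate file exists")
    size, path = min(sizes)  # smallest size wins; ties broken by path name
    return path, size
```

For one source image, the list passed in would hold the outputs of each method, for example `["out-convert.png", "out-pngcrush.png", "out-zopfli.png"]` (hypothetical names).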

Results

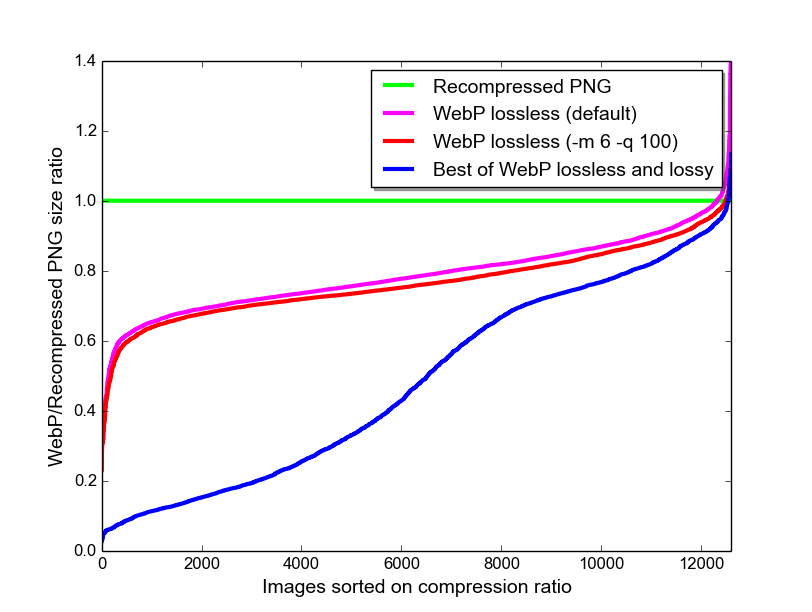

We computed compression density for each of the images in the web corpus, relative to optimized PNG image sizes for three methods:

WebP lossless (default settings)

WebP lossless with the smallest size (-m 6 -q 100)

best of WebP lossless and WebP lossy with alpha (default settings).

We sorted these compression factors, and plotted them in Figure 3.

Figure 3. PNG compression density is used as a reference, at 1.0. The same images are compressed using both lossless and lossy methods. For each image, the size ratio to compressed PNG is computed, and the size ratios are sorted, and shown for both lossless and lossy compression. For the lossy compression curve, the lossless compression is chosen in those cases where it produces a smaller WebP image.
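The construction of the two curves described in the caption can be sketched as below. This is a minimal illustration under the assumption that per-image file sizes are already available as parallel lists; the function and parameter names are hypothetical and not taken from the study.

```python
def sorted_size_ratios(png_sizes, lossless_sizes, lossy_sizes):
    """Return (lossless_curve, lossy_curve) of sorted WebP/PNG size ratios.

    All three inputs are parallel lists of file sizes in bytes, one entry
    per image. A ratio below 1.0 means the WebP file is smaller than the
    optimized PNG. For the lossy curve, the lossless size is used whenever
    it is the smaller of the two WebP files.
    """
    lossless_curve = sorted(
        webp / png for webp, png in zip(lossless_sizes, png_sizes)
    )
    lossy_curve = sorted(
        min(lossless, lossy) / png
        for lossless, lossy, png in zip(lossless_sizes, lossy_sizes, png_sizes)
    )
    return lossless_curve, lossy_curve
```

Each curve is sorted independently, so a given position on the x-axis does not necessarily correspond to the same image on both curves.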

WebP exceeds PNG compression density for both libpng at maximum quality (convert) and ZopfliPNG (Table 1), with encoding (Table 2) and decoding (Table 3) speeds being roughly comparable to those of PNG.

Table 1. Average bits-per-pixel for the three corpora using the different compression methods.

| Image Set | convert -quality 95 | ZopfliPNG | WebP lossless -q 0 -m 1 | WebP lossless (default settings) | WebP lossless -m 6 -q 100 | WebP lossy with alpha |

|---|---|---|---|---|---|---|

| photo | 12.3 | 12.2 | 10.5 | 10.1 | 9.83 | 0.81 |

| graphic | 1.36 | 1.05 | 0.88 | 0.71 | 0.70 | 0.51 |

| web | 6.85 | 5.05 | 4.42 | 4.04 | 3.96 | 1.92 |
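For reference, bits-per-pixel relates file size to image dimensions as sketched below. The helper name is hypothetical, and how the study averaged this value over the web corpus (per image or over total pixels) is not stated, so the sketch covers a single image only.

```python
def bits_per_pixel(file_size_bytes, width, height):
    """Return the compressed size of one image in bits per pixel."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    return 8 * file_size_bytes / (width * height)
```

For the 1024 x 752 images of Figures 1 and 2, a file of 96256 bytes corresponds to exactly 1.0 bits per pixel.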

Table 2. Average encoding time for the compression corpora and for different compression methods.

| Image Set | convert -quality 95 | ZopfliPNG | WebP lossless -q 0 -m 1 | WebP lossless (default settings) | WebP lossless -m 6 -q 100 | WebP lossy with alpha |

|---|---|---|---|---|---|---|

| photo | 0.500 s | 8.7 s | 0.293 s | 0.780 s | 8.440 s | 0.111 s |

| graphic | 0.179 s | 14.0 s | 0.065 s | 0.140 s | 3.510 s | 0.184 s |

| web | 0.040 s | 1.55 s | 0.017 s | 0.072 s | 2.454 s | 0.020 s |
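The study does not state how the times above were collected. One simple way to obtain comparable wall-clock figures for a command-line encoder is sketched below; the function name, the repeat count, and the file names in the usage note are assumptions made for illustration.

```python
import subprocess
import time


def mean_wall_time(cmd, repeats=3):
    """Run `cmd` (a list of arguments) `repeats` times; return mean seconds.

    Measures wall-clock time including process start-up, and raises
    subprocess.CalledProcessError if the command exits with an error.
    """
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    total = 0.0
    for _ in range(repeats):
        start = time.perf_counter()
        subprocess.run(
            cmd,
            check=True,
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
        )
        total += time.perf_counter() - start
    return total / repeats
```

A call such as `mean_wall_time(["cwebp", "-q", "1", "-m", "0", "input.png", "-o", "output.webp"])` would time one of the configurations mentioned earlier, with `input.png` and `output.webp` as placeholder file names.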

Table 3. Average decoding time for the three corpora for image files that are compressed with different methods and settings.

| Image Set | convert -quality 95 | ZopfliPNG | WebP lossless -q 0 -m 1 | WebP lossless (default settings) | WebP lossless -m 6 -q 100 | WebP lossy with alpha |

|---|---|---|---|---|---|---|

| photo | 0.027 s | 0.026 s | 0.027 s | 0.026 s | 0.027 s | 0.012 s |

| graphic | 0.049 s | 0.015 s | 0.005 s | 0.005 s | 0.003 s | 0.010 s |

| web | 0.007 s | 0.005 s | 0.003 s | 0.003 s | 0.003 s | 0.003 s |

Memory Profiling

For the memory profiling, we recorded the maximum resident set size as reported by /usr/bin/time -v.

For the web corpus, the size of the largest image alone defines the maximal memory use. To keep the memory measurement better defined we use a single photographic image (Figure 1) to give an overview of memory use. The graphical image gives similar results.
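A rough Python counterpart to reading the maximum resident set size from /usr/bin/time -v is sketched below. It is an assumption-laden illustration rather than the procedure used in the study: it works only on Unix-like systems, the function name is hypothetical, and because the counter covers all child processes waited for so far, each measurement should be taken in a fresh Python process.

```python
import resource
import subprocess
import sys


def peak_child_rss_kib(cmd):
    """Run `cmd` and return the peak resident set size of children in KiB.

    Uses getrusage(RUSAGE_CHILDREN), which reports the largest value seen
    among all child processes this process has waited for, not just the
    most recent one.
    """
    subprocess.run(
        cmd,
        check=True,
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    rss = resource.getrusage(resource.RUSAGE_CHILDREN).ru_maxrss
    if sys.platform == "darwin":
        rss //= 1024  # macOS reports bytes; Linux reports kilobytes
    return rss
```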

We measured 10 to 19 MiB for libpng and ZopfliPNG, and 25 MiB and 32 MiB for WebP lossless encoding at settings -q 0 -m 1, and -q 95 (with a default value of -m), respectively.

In a decoding experiment, convert -resize 1x1 uses 10 MiB for both the libpng and ZopfliPNG generated PNG files. Using cwebp, WebP lossless decoding uses 7 MiB, and lossy decoding 3 MiB.

Conclusions

We have shown that both encoding and decoding speeds are in the same range as those of PNG. Memory use increases during the encoding phase, but the decoding phase shows a healthy decrease, at least when comparing the behavior of cwebp to that of ImageMagick's convert.

The compression density is better for more than 99% of the web images, suggesting that one can relatively easily change from PNG to WebP.

When WebP is run with default settings, it compresses 42% better than libpng, and 23% better than ZopfliPNG. This suggests that WebP is promising for speeding up image-heavy websites.

References

External Links

The following are independent studies not sponsored by Google, and Google doesn't necessarily stand behind the correctness of all their contents.