Make the world your canvas

Create immersive, world-scale experiences in more than 100 countries with the largest cross-device augmented reality platform. ARCore lets you blend the physical and digital worlds using workflows that are easy to integrate and the understanding of the world we have built through Google Maps.

ARCore

Features

ARCore fundamentals

- Motion tracking, which reports positions relative to the world

- Anchors, which keep an object's position tracked over time

- Environmental understanding, which detects the size and location of all types of surfaces

- Depth understanding, which measures the distance to surfaces from a given point

- Light estimation, which provides information about the average intensity and color correction of the environment

Geospatial API

Scene Semantics

Recording and Playback API

Depth API

Persistent Cloud Anchors

Streetscape Geometry

Featured partners

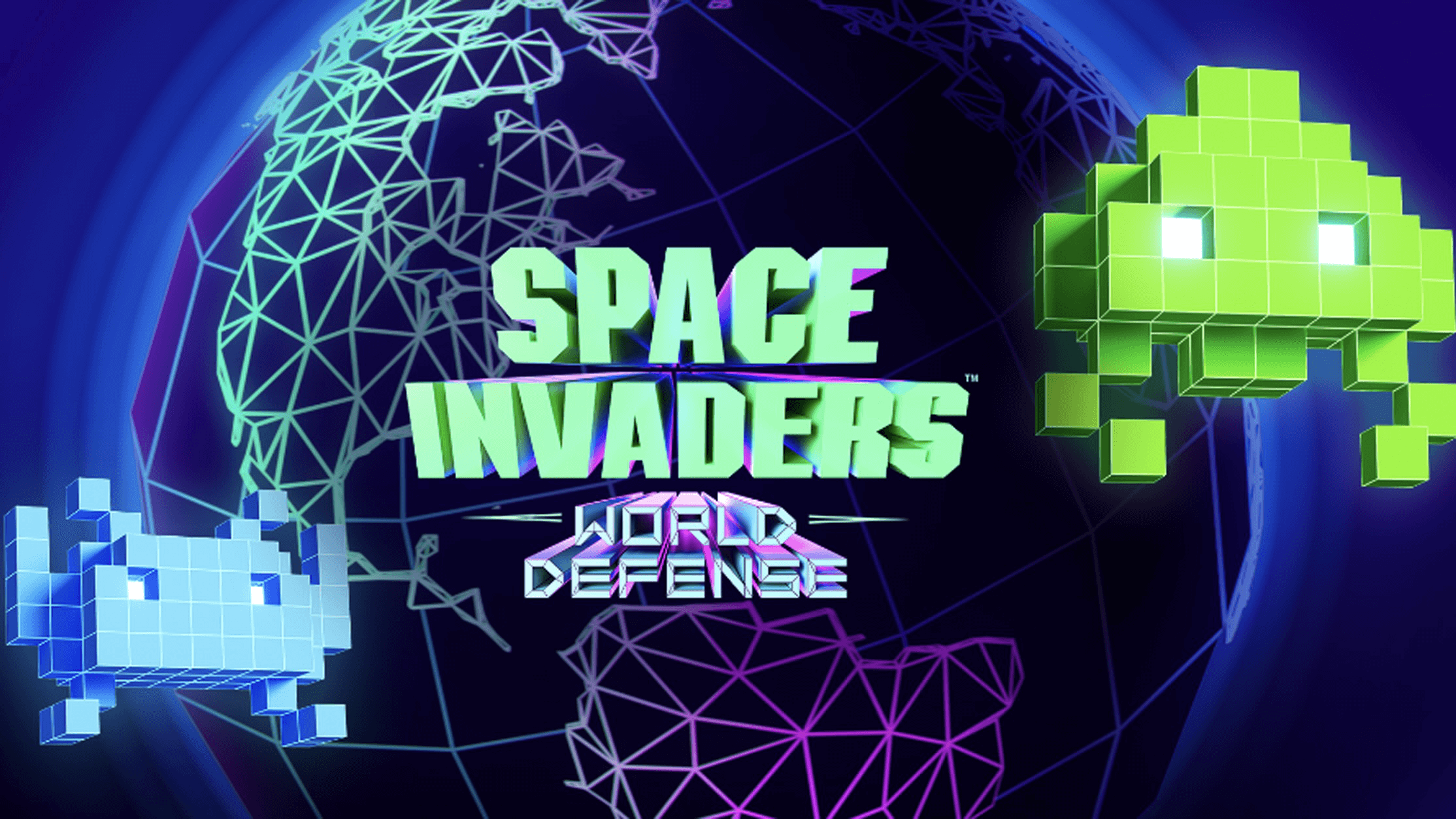

TAITO turns the world into a playground with the immersive SPACE INVADERS AR game

Scavengar highlights women trailblazers through immersive monuments

Gap and Mattel transform the Times Square Gap store into a Barbie experience

Our community

"The whole team was very excited to try the Geospatial API. [We had] the idea of making a world-scale alien invasion game for more than 3 years, and we were waiting for the right technology to become available so we could finally start making it a reality... augmented reality. And when we started checking geospatial locations, the results exceeded our wildest expectations."

Featured hackathon submissions

World Ensemble

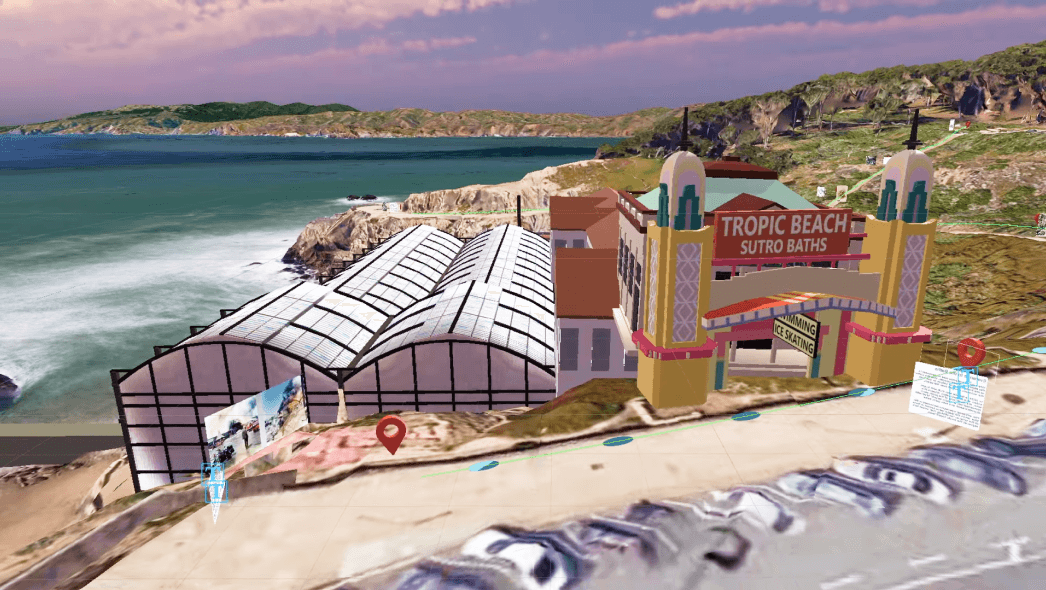

Sutro Baths AR Tour

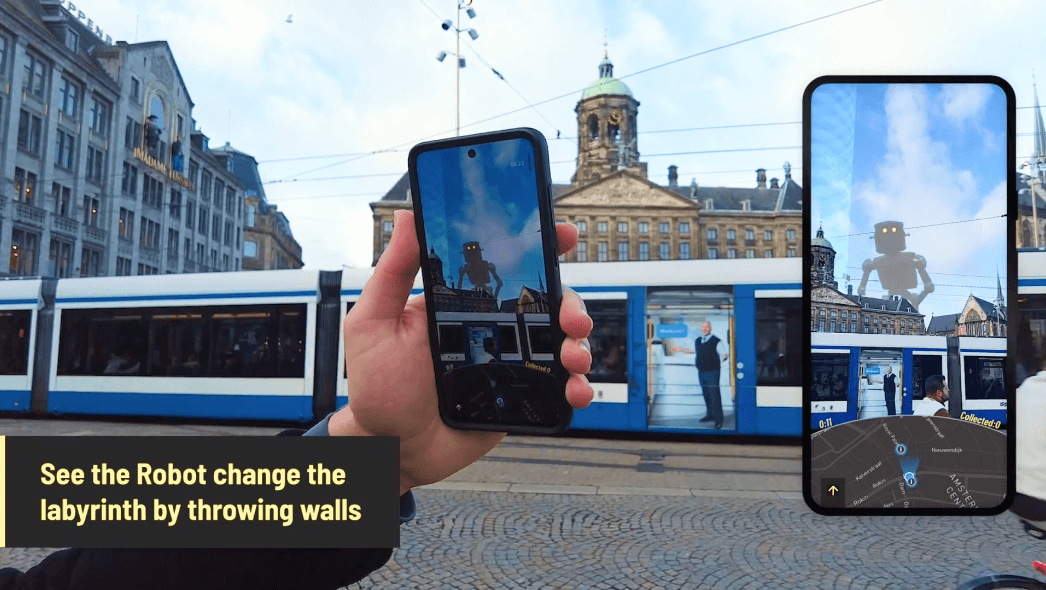

GEOMAZE: The Urban Quest

Simmy

Congratulations to the winners of Google's Immersive Geospatial Challenge

Meet the winners of our most recent hackathon, which featured submissions built with Geospatial Creator in five different categories, including Entertainment and Events, and Commerce.

Congratulations to the winners of the ARCore Geospatial API Challenge

Meet the winners of our previous hackathon, whose submissions used the ARCore Geospatial API in five different categories, including gaming and navigation.

What's new in ARCore and Geospatial Creator

FEATURED BLOG POST

How we built SPACE INVADERS: World Defense

Learn how the ARCore Geospatial API was used in SPACE INVADERS: World Defense to turn the world into your playground, and how the Streetscape Geometry API and Geospatial Creator helped build an immersive augmented reality game.