1. Before you begin

In the previous codelab, you built a basic app for audio classification.

What if you want to customize the audio classification model to recognize classes of audio that are not present in a pre-trained model? Or what if you want to customize the model using your own data?

In this codelab, you will customize a pre-trained audio classification model to detect bird sounds. The same technique can be replicated using your own data.

Prerequisites

This codelab has been designed for experienced mobile developers who want to gain experience with Machine Learning. You should be familiar with:

- Android development using Kotlin and Android Studio

- Basic Python syntax

What you'll learn

- How to do transfer learning for the audio domain

- How to create your own data

- How to deploy your own model on an Android app

What you'll need

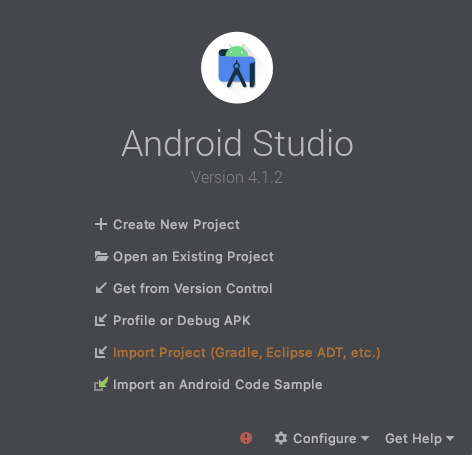

- A recent version of Android Studio (v4.1.2+)

- A physical Android device running at least API 23 (Android 6.0)

- The sample code

- Basic knowledge of Android development in Kotlin

2. The Birds dataset

You will use a Birdsong dataset that is already prepared to make it easier to use. All the audio files come from the Xeno-canto website.

This dataset contains songs from:

Name: House Sparrow | Code: houspa |

| |

Name: Red Crossbill | Code: redcro |

| |

Name: White-breasted Wood-Wren | Code: wbwwre1 |

| |

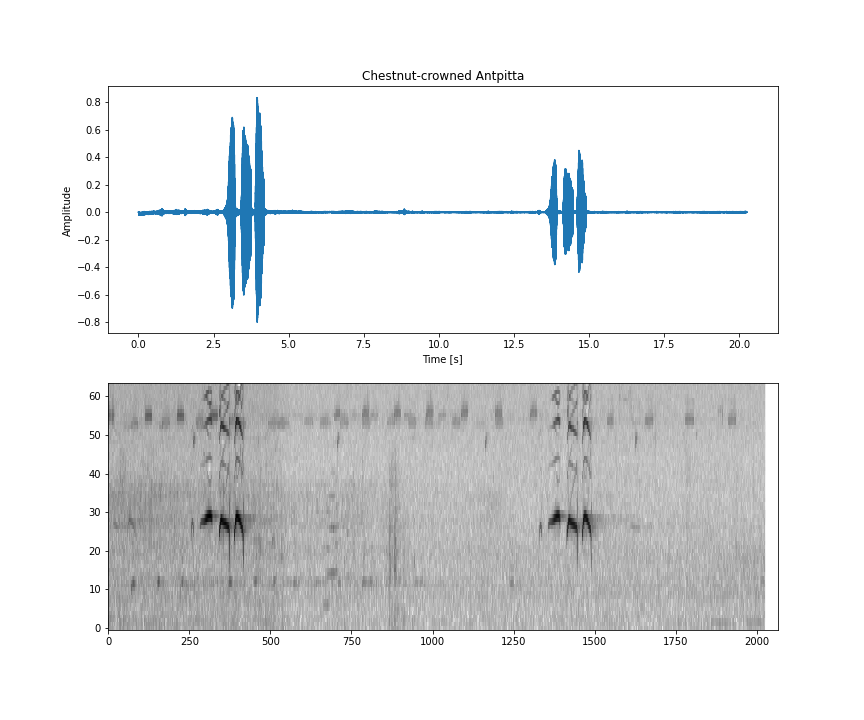

Name: Chestnut-crowned Antpitta | Code: chcant2 |

| |

Name: Azara's Spinetail | Code: azaspi1 |

|

This dataset is in a zip file and its contents are:

- A metadata.csv file that has all the information about each audio file, such as who recorded the audio, where it was recorded, the license of use, and the name of the bird.

- A train folder and a test folder.

- Inside the train and test folders, there's a folder for each bird code. Each of these folders contains all the .wav files for that bird in that split.
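To make the folder layout above concrete, here is a minimal Python sketch that counts the .wav files per split and bird code. It assumes the zip file has already been extracted somewhere; the `dataset_root` argument and the function name `count_wav_files` are hypothetical and not part of the codelab.

```python
from collections import Counter
from pathlib import Path


def count_wav_files(dataset_root):
    """Count .wav files per (split, bird_code) under dataset_root.

    Assumes the layout described above:
    <dataset_root>/<train|test>/<bird_code>/<file>.wav
    """
    counts = Counter()
    for split in ("train", "test"):
        split_dir = Path(dataset_root) / split
        if not split_dir.is_dir():
            # A missing split is skipped rather than treated as an error.
            continue
        # One folder level per bird code, then the audio files.
        for wav_path in split_dir.glob("*/*.wav"):
            counts[(split, wav_path.parent.name)] += 1
    return counts
```

Calling `count_wav_files` on the extracted folder gives a quick check that every bird code has files in both splits before training.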

The audio files are all in the wav format and follow this specification:

- 16000 Hz sample rate

- 1 audio channel (mono)

- 16-bit sample depth

This specification is important because you will use a base model that expects data in this format. To learn more about it, you can read additional information on this blog post.

To make the whole process easier, you won't need to download the dataset to your machine. Instead, you'll use it on Google Colab (later in this guide).

If you want to use your own data, all your audio files should be in this specific format too.
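If you bring your own recordings, one way to check them against the specification above is with Python's standard `wave` module. This is a minimal sketch, not part of the codelab: the function name `wav_matches_spec` and the constant names are hypothetical, and it assumes uncompressed PCM .wav files, which is what the `wave` module can read.

```python
import wave

# Values taken from the specification above.
EXPECTED_SAMPLE_RATE = 16000       # Hz
EXPECTED_CHANNELS = 1              # mono
EXPECTED_SAMPLE_WIDTH_BYTES = 2    # 16 bits per sample


def wav_matches_spec(path):
    """Return True if the WAV file at `path` is 16 kHz, mono, 16-bit."""
    with wave.open(str(path), "rb") as wav_file:
        return (
            wav_file.getframerate() == EXPECTED_SAMPLE_RATE
            and wav_file.getnchannels() == EXPECTED_CHANNELS
            and wav_file.getsampwidth() == EXPECTED_SAMPLE_WIDTH_BYTES
        )
```

Files that fail the check would need to be resampled or converted before they are used for training.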

3. Get the sample code

Download the Code

Click the following link to download all the code for this codelab:

Or if you prefer, clone the repository:

git clone https://github.com/googlecodelabs/odml-pathways.git

Unpack the downloaded zip file. This will unpack a root folder (odml-pathways) with all of the resources you will need. For this codelab, you will only need the sources in the audio_classification/codelab2/android subdirectory.

The audio_classification/codelab2/android subdirectory contains two directories:

- starter: Starting code that you build upon for this codelab.

- final: Completed code for the finished sample app.

Import the starter app

Start by importing the starter app into Android Studio:

- Open Android Studio and select Import Project (Gradle, Eclipse ADT, etc.)

- Open the starter folder (audio_classification/codelab2/android/starter) from the source code you downloaded earlier.

To be sure that all dependencies are available to your app, sync your project with the Gradle files when the import process has finished.

- Select Sync Project with Gradle Files from the Android Studio toolbar.

4. Understand the starter app

This is the same app that was built in the first codelab for audio classification, Create a basic app for audio classification.

To understand the code in more detail, it's recommended that you do that codelab before continuing.

All the code is in MainActivity, to keep it as simple as possible.

In summary, the code performs the following tasks:

- Loading the model

val classifier = AudioClassifier.createFromFile(this, modelPath)

- Creating the audio recorder and beginning the recording

val tensor = classifier.createInputTensorAudio()
val format = classifier.requiredTensorAudioFormat
val recorderSpecs = "Number Of Channels: ${format.channels}\n" +
    "Sample Rate: ${format.sampleRate}"
recorderSpecsTextView.text = recorderSpecs
val record = classifier.createAudioRecord()
record.startRecording()

- Creating a timer thread to run inference. The parameters for the scheduleAtFixedRate method are how long it waits before the first execution and the time between successive executions. In the code below, it starts after 1 millisecond and runs again every 500 milliseconds.

Timer().scheduleAtFixedRate(1, 500) {
    ...
}

- Running the inference on the captured audio

val numberOfSamples = tensor.load(record)
val output = classifier.classify(tensor)

- Filtering out classifications with low scores

val filteredModelOutput = output[0].categories.filter {
    it.score > probabilityThreshold
}

- Showing the results on the screen

val outputStr = filteredModelOutput.map { "${it.label} -> ${it.score} " }
    .joinToString(separator = "\n")
runOnUiThread {
    textView.text = outputStr
}

You can now run the app and play with it as is, but remember that it's using a more generic pre-trained model.

5. Train a custom Audio Classification model with Model Maker

In the previous step, you downloaded an app that uses a pre-trained model to classify audio events. But sometimes you need to customize this model to audio events that you're interested in or make it a more specialized version.

As mentioned before, you'll specialize the model for bird sounds, using a dataset of bird recordings curated from the Xeno-canto website.

Colaboratory

Next, let's go to Google Colab to train the custom model.

It will take about 30 minutes to train the custom model.

If you want to skip this step, you can download the model that you would have trained in the Colab with the provided dataset and proceed to the next step.

6. Add the custom TFLite model to the Android app

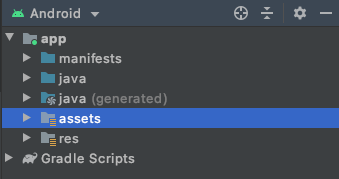

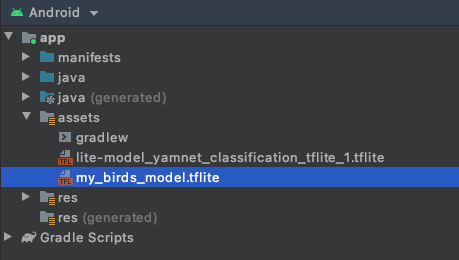

Now that you have trained your own audio classification model and saved it locally, you need to place it in the assets folder of the Android app.

The first step is to move the downloaded model from the previous step to the assets folder in your app.

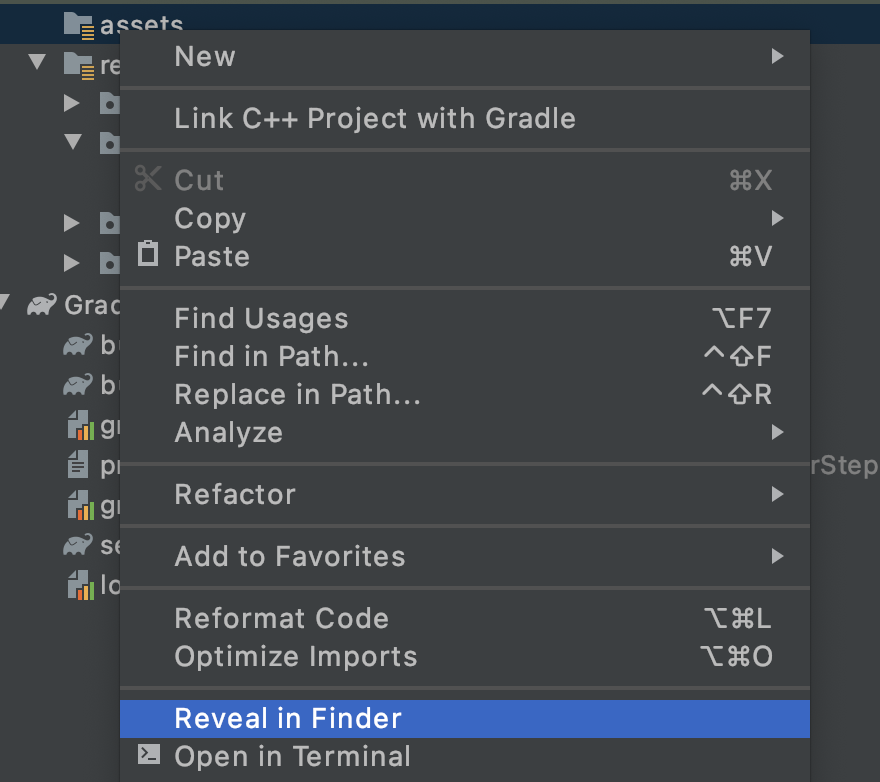

- In Android Studio, with the Android Project view, right-click the assets folder.

- You'll see a popup with a list of options. One of these will be to open the folder in your file system. Find the appropriate one for your operating system and select it. On a Mac this will be Reveal in Finder, on Windows it will be Open in Explorer, and on Ubuntu it will be Show in Files.

- Copy the downloaded model into the folder.

Once you've done this, go back to Android Studio, and you should see your file within the assets folder.

7. Load the new model on the base app

The base app already uses a pre-trained model. You'll replace it with the one you've just trained.

- TODO 1: To load the new model after adding it to the assets folder, change the value of the modelPath variable:

var modelPath = "my_birds_model.tflite"

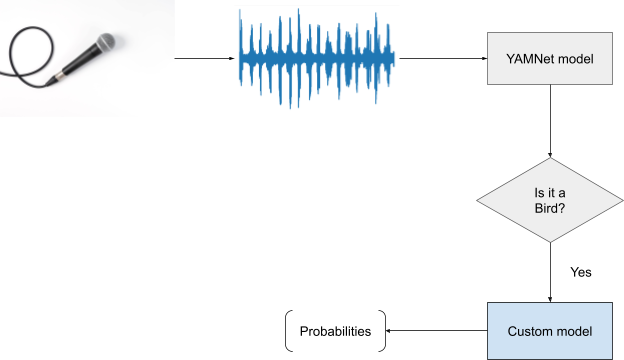

The new model has two outputs (heads):

- The original, more generic output from the base model you used, in this case YAMNet.

- The secondary output that is specific to the birds you used in training.

This is necessary because YAMNet does a great job of recognizing many common classes, such as Silence. With it, you don't have to worry about all the other classes that you didn't add to your dataset.

What you'll do now is this: if the YAMNet classification shows a high score for the bird class, you'll look at the other output to see which bird it is.

- TODO 2: Check whether the first classification head reports with high confidence that the sound is a bird. Here you will change the filtering to also filter out anything that is not Bird. The result is declared with var because it is reassigned in the next step:

var filteredModelOutput = output[0].categories.filter {
    it.label.contains("Bird") && it.score > .3
}

- TODO 3: If the base head of the model detects with good probability that there was a bird in the audio, you will find out which bird it is from the second head:

if (filteredModelOutput.isNotEmpty()) {
    Log.i("Yamnet", "bird sound detected!")
    filteredModelOutput = output[1].categories.filter {
        it.score > probabilityThreshold
    }
}
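The gating logic of TODO 2 and TODO 3 does not depend on Android, so it can be sketched on its own. The following minimal Python sketch uses plain `(label, score)` tuples in place of the classifier output so that it can run anywhere. The function name `pick_bird_labels` and the default value of `probability_threshold` are assumptions made for illustration; only the 0.3 threshold on the first head comes from the code above.

```python
def pick_bird_labels(yamnet_categories, bird_categories,
                     bird_gate_threshold=0.3, probability_threshold=0.3):
    """Return the categories to display, given the two model heads.

    yamnet_categories: (label, score) tuples from the first head (output[0]).
    bird_categories:   (label, score) tuples from the second head (output[1]).
    probability_threshold defaults to an assumed value, not one taken
    from the codelab.
    """
    # TODO 2: keep only confident "Bird" classifications from the first head.
    filtered = [
        (label, score) for label, score in yamnet_categories
        if "Bird" in label and score > bird_gate_threshold
    ]
    # TODO 3: if a bird was detected, replace the result with the
    # species-level classifications from the second head.
    if filtered:
        filtered = [
            (label, score) for label, score in bird_categories
            if score > probability_threshold
        ]
    return filtered
```

When the first head does not report a bird, the function returns an empty list, which corresponds to the app showing no result for that audio window.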

And that's it. Changing the model to use the one you've just trained is simple.

The next step is testing it.

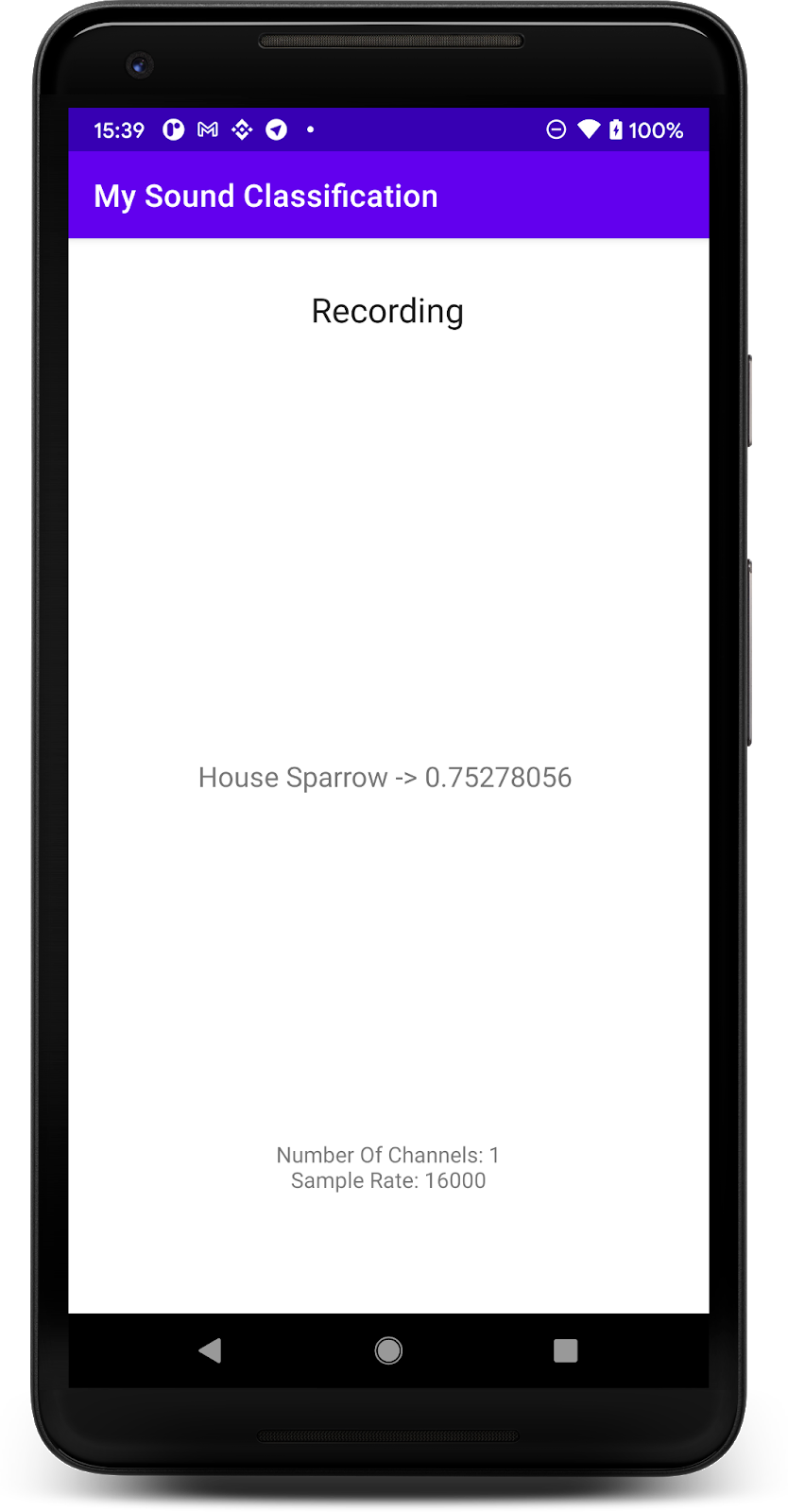

8. Test the app with your new model

You have integrated your audio classification model into the app, so let's test it.

- Connect your Android device, and click Run in the Android Studio toolbar.

The app should be able to correctly predict the audio of the birds. To make testing easier, play one of the audio files from your computer (from previous steps), and your phone should be able to detect it. When it does, it will show on the screen the bird's name and the probability of it being correct.

9. Congratulations

In this codelab, you learned how to create your own audio classification model with Model Maker and deploy it to your mobile app using TensorFlow Lite. To learn more about TFLite, take a look at other TFLite samples.

What we've covered

- How to prepare your own dataset

- How to do Transfer Learning for Audio Classification with Model Maker

- How to use your model on an Android App

Next Steps

- Try with your own data

- Share with us what you build

Learn More

- Link to learning path

- TensorFlow Lite documentation

- Model Maker documentation

- TensorFlow Hub documentation

- On-device Machine Learning with Google technologies