How Lighthouse calculates your overall Performance score

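In general, only metrics contribute to your Lighthouse Performance score, not the results of Opportunities or Diagnostics. That said, acting on the opportunities and diagnostics is likely to improve the metric values, so there is an indirect relationship.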

The sections below outline why the score can fluctuate, how it is composed, and how Lighthouse scores each individual metric.

Why your score fluctuates

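Much of the variability in your overall Performance score and metric values is not caused by Lighthouse. When your Performance score fluctuates, it is usually because the underlying conditions changed. Common causes include: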

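- A/B tests or changes in the ads being served

- Internet traffic routing changes

- Testing on different devices, such as a high-performance desktop and a low-performance laptop

- Browser extensions that inject JavaScript and add or modify network requests

- Antivirus software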

Lighthouse's documentation on variability covers this in more depth.

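Furthermore, even though Lighthouse can give you a single overall Performance score, it may be more useful to think of your site's performance as a distribution of scores rather than a single number. See the introduction of User-Centric Performance Metrics to understand why.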
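One way to look at a distribution rather than a single number is to run Lighthouse several times and summarize the results. The following is a minimal sketch of that idea; the function name `summarize_runs` and the example scores are hypothetical and are not taken from Lighthouse or from any real report.

```python
from statistics import median


def summarize_runs(scores):
    """Summarize Performance scores (0 to 100) collected from repeated runs."""
    if not scores:
        raise ValueError("at least one score is required")
    ordered = sorted(scores)
    return {
        "min": ordered[0],
        "median": median(ordered),
        "max": ordered[-1],
        # Spread between the best and the worst run.
        "range": ordered[-1] - ordered[0],
    }


# Hypothetical scores from five runs of the same page.
example_runs = [78, 84, 81, 90, 79]
summary = summarize_runs(example_runs)
print(summary)
```

Reporting the median together with the range makes a single outlier run less likely to be mistaken for a real regression or improvement.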

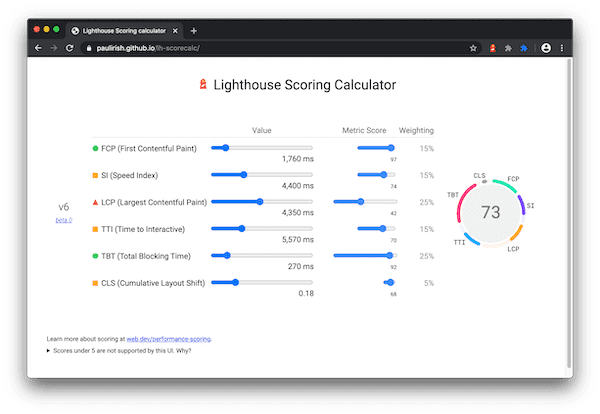

How the Performance score is weighted

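The Performance score is a weighted average of the metric scores. Naturally, more heavily weighted metrics have a bigger effect on your overall Performance score. The metric scores are not visible in the report, but they are calculated under the hood.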

Lighthouse 10

| Audit | Weight |
|---|---|
| First Contentful Paint | 10% |
| Speed Index | 10% |
| Largest Contentful Paint | 25% |
| Total Blocking Time | 30% |
| Cumulative Layout Shift | 25% |
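The weighted average can be sketched as follows, using the Lighthouse 10 weights from the table above. The dictionary keys, the function name `performance_score`, and the example metric scores are assumptions made for illustration; this is not Lighthouse's own code.

```python
# Weights from the Lighthouse 10 table, expressed as fractions of 1.
LIGHTHOUSE_10_WEIGHTS = {
    "First Contentful Paint": 0.10,
    "Speed Index": 0.10,
    "Largest Contentful Paint": 0.25,
    "Total Blocking Time": 0.30,
    "Cumulative Layout Shift": 0.25,
}


def performance_score(metric_scores, weights=LIGHTHOUSE_10_WEIGHTS):
    """Return the weighted average of per-metric scores (each 0 to 100)."""
    total_weight = sum(weights.values())
    weighted_sum = sum(weights[name] * metric_scores[name] for name in weights)
    return weighted_sum / total_weight


# Hypothetical metric scores, for illustration only.
example_scores = {
    "First Contentful Paint": 90,
    "Speed Index": 80,
    "Largest Contentful Paint": 70,
    "Total Blocking Time": 60,
    "Cumulative Layout Shift": 100,
}
overall = performance_score(example_scores)
print(round(overall, 1))
```

With these example inputs, Total Blocking Time (weight 30%) pulls the result down more than the equally low score would for a 10% metric, which is the effect of heavier weighting the text describes.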

Lighthouse 8

How metric scores are determined

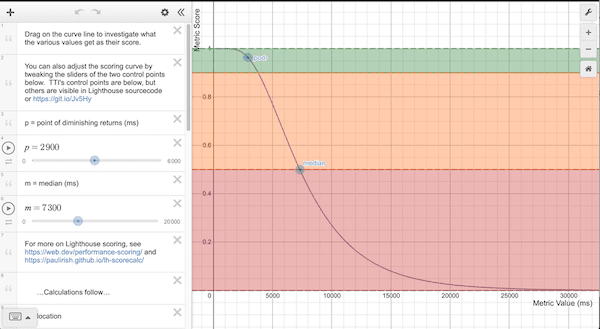

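Once Lighthouse has gathered the performance metrics (mostly reported in milliseconds), it converts each raw metric value into a metric score from 0 to 100 by looking at where the metric value falls on its Lighthouse scoring distribution. The scoring distribution is a log-normal distribution derived from the performance metrics of real website performance data in HTTP Archive.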

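For example, Largest Contentful Paint (LCP) measures when a user perceives that the largest content of a page is visible. The metric value for LCP represents the time between the user initiating the page load and the page rendering its primary content. Based on real website data, top-performing sites render LCP in about 1,220 ms, so that metric value is mapped to a score of 99.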

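Going a bit deeper, the Lighthouse scoring curve model uses HTTP Archive data to determine two control points, which then set the shape of a log-normal curve. The 25th percentile of HTTP Archive data becomes a score of 50 (the median control point), and the 8th percentile becomes a score of 90 (the good/green control point). When exploring the scoring curve, note that between scores of 0.50 and 0.92 there is a near-linear relationship between metric value and score. A score of around 0.96 is the "point of diminishing returns": above it, the curve pulls away, requiring increasingly more metric improvement to raise an already high score.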
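A log-normal curve pinned to two control points can be sketched as below. This is a minimal illustration of the idea, not Lighthouse's actual implementation: the function name `log_normal_score`, the parameter names `median` and `p10`, and the control-point values in the example are all assumptions. The function takes the metric value that should score 50 and the metric value that should score 90 as inputs, however those were derived.

```python
import math
from statistics import NormalDist


def log_normal_score(value, median, p10):
    """Map a raw metric value to a score in [0, 1]; lower values score higher.

    median: metric value that should receive a score of 0.50.
    p10:    metric value that should receive a score of 0.90.
    """
    if not 0 < p10 < median:
        raise ValueError("expected 0 < p10 < median")
    if value <= 0:
        return 1.0
    # Pick sigma so that the p10 control point lands exactly on 0.90.
    z90 = NormalDist().inv_cdf(0.90)
    sigma = math.log(median / p10) / z90
    # Standardize the log of the value relative to the median control point.
    z = math.log(value / median) / sigma
    # The score is the share of the distribution that is slower than `value`.
    return 1.0 - NormalDist().cdf(z)


# Illustrative control points in milliseconds (assumed, not from the text).
MEDIAN_MS = 4000
P10_MS = 2500
for value_ms in (1000, 2500, 4000, 8000):
    print(value_ms, round(log_normal_score(value_ms, MEDIAN_MS, P10_MS), 3))
```

By construction, the curve returns 0.50 at the median control point and 0.90 at the second control point; every other score follows from the log-normal shape those two points define.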

How desktop vs mobile is handled

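As mentioned above, the score curves are determined from real performance data. Prior to Lighthouse v6, all score curves were based on mobile performance data, yet a desktop Lighthouse run used those same curves. In practice, this led to artificially inflated desktop scores. Lighthouse v6 fixed this bug by using desktop-specific scoring. While you can expect overall changes in your Performance score when moving from Lighthouse 5 to 6, desktop scores in particular will be significantly different.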

How scores are color-coded

The metric scores and the Performance score are colored according to these ranges:

- 0 to 49 (red): Poor

- 50 to 89 (orange): Needs Improvement

- 90 to 100 (green): Good
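The ranges above translate directly into a small lookup. The sketch below assumes integer scores from 0 to 100; the function name `score_rating` is hypothetical.

```python
def score_rating(score):
    """Return (color, label) for a score from 0 to 100, per the ranges above."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= 90:
        return ("green", "Good")
    if score >= 50:
        return ("orange", "Needs Improvement")
    return ("red", "Poor")


for example in (49, 50, 89, 90):
    print(example, score_rating(example))
```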

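To provide a good user experience, sites should strive for a good score (90 to 100). A "perfect" score of 100 is extremely challenging to achieve and is not expected. For example, taking a score from 99 to 100 needs about the same amount of metric improvement as taking it from 90 to 94.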

What can developers do to improve their performance score?

First, use the Lighthouse scoring calculator to understand which thresholds you should aim for to reach a given Lighthouse Performance score.

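In the Lighthouse report, the Opportunities section has detailed suggestions and documentation on how to implement them. Additionally, the Diagnostics section lists further guidance that developers can explore to improve their performance.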